Revisiting the vCider SDN Solution Prior to Cisco Acquisition

I never really got a chance to learn what vCider brought to the menu of network industry disruption before their recent acquisition by Cisco. Matt Nabors (@itsjustrouting) and I were talking about vCider the other day, and I realized I knew little about them beyond some general concepts. I went to check out their site and blog and saw that the content had been removed. Matt has been bearish and ahead on thought leadership on the topic of SDN, back when one would be scoffed at or jumped on by the tech bullies. I think the idea of revisiting some long-in-the-tooth practices is beginning to catch on, which is great for those of us held accountable for their performance and availability. So I wanted to revisit what little I remember and post the web mirrors that I didn’t read enough of. Chris Marino, one of the co-founders, wrote quite a bit about his thoughts on the emerging SDN opportunities, along with some interesting insights from a VC.

I know companies are working on them. Cisco, VMware for sure. BigSwitch Networks has been talking about these kinds of applications for a while now and Nicira is one of the major contributors to OpenStack’s Quantum Network API. It seems to me that a standard Quantum-like API would be more valuable toward achieving the objectives of a SDN, than OpenFlow. -Chris Marino, vCider

vCider Overview

My two-cent overview, from what I remember: vCider’s technology was a solution delivered completely in software. A vCider driver is installed that builds a MAC-in-UDP tunnel. The Tunnel Endpoint (TEP) then registers with the vCider website to pick up its TEP-to-IP mapping. Before I reinvent the wheel explaining this: Ivan Pepelnjak did a great writeup and a video explanation in his cloud networking webinar, and Greg Ferro also did an excellent writeup. Between those two, most of my questions were answered.

Big vendors can sit back and let the smaller companies innovate with creative network applications that take advantage of OpenFlow enabled devices, or they can build them themselves. The really smart ones are not only going to develop their own applications, but also get in front of all this and make their devices the preferred platform for everyone’s network applications. It will be interesting to watch which vendors choose which path. The path they take could very well shape the industry for years to come. -Juergen Brendel, vCider

vCider is part of the big company now, which means it will need to inject itself into Cisco’s vast portfolio of products and presumably integrate into the onePK SDN vision Cisco is developing. Cisco has made quite a few announcements recently, with presumably more upcoming, that allow for some connecting of dots for a preview of an SDN data center strategy with splashes of cloud, fabric, tunnels, orchestration and stacks. vCider’s focus on hybrid cloud integration is progressive. I pondered similar questions a while back in Public Cloud NaaS. vCider’s innovation isn’t in the data plane tunneling but in the orchestration of where TEPs land and presumably in wrapping some policy around those TEPs.

All of this has interesting implications for current partnerships. SDN in the data center has had so much focus due to the ever-growing earnings potential in the data center and the ability to avoid the hardware constraints presented in today’s silicon.

I enjoyed going back and reading the posts and documentation from vCider, so I thought I would post some of the content that I found interesting. Chris Marino and Juergen Brendel are both named authors in the posts. He is bullish on software and seemingly less so on some aspects of SDN. As you can see from vCider.com, the content was taken down, with a teaser about an OpenStack integration focus.

The vCider content is all viewable from web caches, nothing secretive, so if there is a post I didn’t put up that you were interested in reading, you can find it in the cache. So if you missed it the first time around, here you go! To reiterate: nothing below here is my content; it is merely web cache being reposted so folks can enjoy some nice diagrams and commentary.

vCider Cache Begins

Virtual Private Cloud Frequently Asked Questions

Virtual Private Clouds

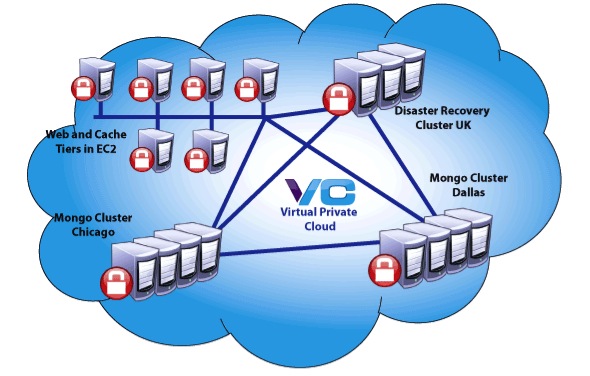

1. What is a Virtual Private Cloud?

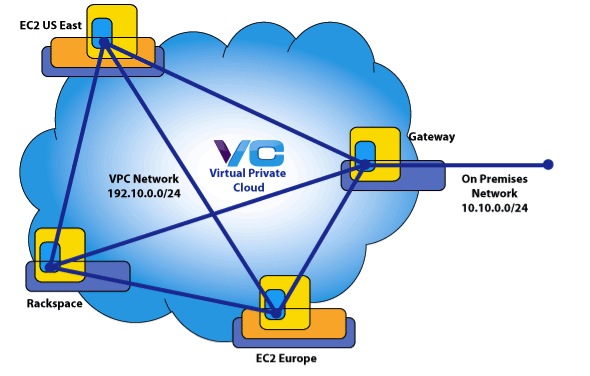

A Virtual Private Cloud (VPC) is a private network that you control and manage but that at least partially runs outside your enterprise data center, typically on one or more public cloud platforms such as Amazon EC2 or Rackspace. A VPC enables ‘hybrid cloud’ computing since it provides a seamless extension of your corporate LAN into the public cloud, while remaining secure. A VPC provides a mechanism by which public cloud resources can be isolated and secured on the public cloud via their own ‘virtual network’ as well as a way to attach this virtual network to the enterprise LAN.

2. Why do I need a VPC?

Three reasons: flexibility, security, and cost.

A VPC enables you to securely connect whatever cloud or on-premises systems you like in a fast private network. Want to span regions or cloud platforms to support HA architectures or simply to choose best-of-breed providers? That’s almost impossible to do without a VPC. The automation provided by a VPC eliminates the need for manually configuring networks with OpenVPN or host-based firewalls. VPCs reduce IT labor costs while improving flexibility and efficiency.

Bottom line: Anyone who wants to use public cloud infrastructure for applications that require secure communications among cloud resources and/or needs to extend or access a corporate data center requires a VPC.

3. Why can’t I simply use a VPN?

A VPN supports a narrow use case of a VPC. A VPN typically provides a point-to-point encrypted tunnel for secure communications. A VPC provides secure communications among all systems in the VPC. To accomplish this with a VPN would require a full mesh of point-to-point tunnels, creating significant and ongoing administrative overhead. This is especially burdensome when considering the dynamic nature of typical cloud-based applications.
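To put a rough number on that overhead: a full mesh of N systems needs N*(N-1)/2 point-to-point tunnels. The small sketch below is only an illustration of that arithmetic; the function name `mesh_tunnels` and the sample sizes are made up for this example.

```shell
#!/bin/sh
# Number of point-to-point tunnels needed to fully mesh N systems: N*(N-1)/2.
mesh_tunnels() {
    n=$1
    echo $(( n * (n - 1) / 2 ))
}

# Print the tunnel count for a few example network sizes.
for count in 4 16 64; do
    echo "$count systems -> $(mesh_tunnels "$count") tunnels"
done
```

The count grows quadratically, and every system added or removed by an elastic application means touching the configuration of every other member of the mesh.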

4. Doesn’t my IaaS provider offer this kind of VPC capability? How is vCider different?

Most IaaS providers offer some kind of VPC or VPN solution so that the provider’s resources can access on-premises systems.

However, vCider is unique in that it offers a way to build your own VPC across IaaS providers (e.g., across Amazon and Rackspace, or Amazon and Terremark, or Amazon, Rackspace, and Terremark). Also, the vCider VPC is built on a switched layer 2 virtual network. This virtual network supports familiar enterprise LAN capabilities such as multicast, IP failover, etc.

Increasingly, cloud applications are deployed across more than one IaaS provider to avoid service outages and to support disaster recovery. Also, cloud users often wish to deploy parts of their application at different IaaS providers that offer price or performance advantages. Sometimes a provider might be physically located closer to end users or have some other unique advantage. Whenever they deploy their application across providers, the proprietary VPC/VPN solution is no longer an option. Cloud users need a universal solution for securely connecting cloud resources in a private network.

Finally, the vCider VPC offers fine-grained control over cloud cloaking exclusion rules for packet filtering. This lets users selectively open up specific ports to the public Internet. This is especially useful for public-facing Web applications that run in a VPC. Web traffic can be accepted on a specific system, while the rest of the network remains private and cloaked, invisible to malicious users.

Virtual Networks

1. What is a Virtual Network?

Simply stated, a virtual network is a network that you control, that runs on one that you don’t control. Virtual networks are independent and isolated from other networks and the hardware on which they run. Just as multiple virtual machines (VMs) can run inside a hypervisor on a single host, many virtual networks can run simultaneously on the same physical network.

2. What role does a virtual network play in a Virtual Private Cloud?

A virtual network is the foundation of a Virtual Private Cloud. In order to extend a data center into the cloud, at a minimum, the network in the public cloud needs to support the user’s own private IP addresses. This can only be achieved with a virtual network.

In addition, to secure communications between nodes within the VPC, all traffic must be encrypted. The most efficient place for this kind of encryption is in the network itself. A virtual network can provide this.

Finally, the vNet is the means by which the VPC isolates the cloud resources from the public Internet. vCider achieves this isolation through a technique called ‘Cloud Cloaking’.

3. Can a vCider virtual network stretch across IaaS providers?

Yes. vCider’s virtual network technology lets users build virtual networks that span the cloud. Cloud resources that run in EC2 can be networked with systems that run in Rackspace Cloud or physical systems at a co-lo provider. As long as the systems can run the vCider software and have Internet access, they can be included in a virtual network. This connectivity is part of any vCider Virtual Private Cloud.

4. How do I connect my data center to the Virtual Private Cloud?

The virtual network built in the public cloud can be seamlessly attached to a corporate data center and LAN through the vCider Virtual Gateway.

The Gateway is a system on the virtual network that also has access to the physical network in the corporate data center. This typically is a physical machine that runs in the DMZ or behind an appropriately configured firewall. vCider automatically detects these external networks and presents to the subscriber the option of using that system as an access gateway. Once a system is specified as a gateway, vCider automatically configures routes to the gateway on all the systems on the virtual network, giving them access to the corporate LAN.
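vCider sets these routes up on its own, so nothing needs to be typed by hand. Purely as an illustration of what such a route amounts to on a Linux node, the sketch below prints (without running) an equivalent `ip route` command. The LAN prefix, the gateway address and the helper name `gateway_route_cmd` are hypothetical values chosen for this example, not vCider defaults.

```shell
#!/bin/sh
# Print (do not run) the route a node would need to reach the corporate LAN
# through the gateway's address on the virtual network.
# All three arguments are hypothetical example values, not vCider defaults.
gateway_route_cmd() {
    lan_cidr=$1   # corporate LAN prefix behind the gateway
    gw_ip=$2      # gateway's address on the virtual network
    dev=$3        # virtual network interface on this node
    echo "ip route add $lan_cidr via $gw_ip dev $dev"
}

gateway_route_cmd 10.1.0.0/16 172.16.1.3 vcider0
```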

5. Can I use my existing VPN gateway hardware to connect to the VPC?

Yes. This simply requires that you set up a VPN connection to a system on the vCider virtual network, then configure that node as the vCider Gateway.

6. Can I put the physical servers in my data center on the virtual network?

Yes. Just like any virtual machine that’s running in the cloud, systems in your data center, once configured with vCider software, can join the virtual network and become part of the VPC. The physical machines in your data center would use an IP address on the virtual network to communicate with systems outside of the data center.

7. Do I need to install any special hardware to use vCider?

No. vCider’s virtual networks are delivered on demand and can be configured directly through the configuration console. The only software required is a device driver and monitoring daemon.

8. What is Cloud Cloaking?

Cloud Cloaking is a security technique by which systems running in the public cloud are rendered invisible to the public Internet. By cloaking public cloud resources, vCider makes the attack surface of your cloud application disappear. With Cloud Cloaking no malicious traffic can access the systems. The only way to access the applications is via the private non-routable IP address across the virtual network. This effectively locks down all public cloud resources.

9. How is this different from a firewall that I run on my instances?

A host-based firewall that you load on your cloud systems can provide many important functions. However, the administrative burden of keeping policies consistent across a set of elastic cloud systems can be overwhelming.

vCider’s VPC takes an entirely different approach. Rather than establishing firewall rules for access to cloud-based systems, a VPC lets users secure their systems with their existing enterprise security infrastructure.

Cloud cloaking locks down the systems from all external access; the virtual network lets you build network topologies and steer traffic flows exactly where you want them. This lets you build out cloud network topologies that include enterprise firewalls, access gateways and even IDS/IPS systems securing your cloud resources exactly the same as your internal systems.

Software

1. What software do I need to install in order to run a vCider VPC?

There is one download to be installed. This installs a user space process called the Network Monitoring Daemon (NMD) and a high-performance network device driver.

3. Do you offer it as an Amazon EC2 AMI?

No. However, please see our Download page for a list of standard AMIs that are compatible with our software. Any of these AMIs can be launched and our software installed directly.

4. What operating systems are supported?

vCider currently supports Linux 2.6.31 and above.

5. How big is it?

The NMD and device driver are about 20KB each. The download includes executables for all supported kernels which is why the download is about 1MB.

6. Is it available as open source?

7. How is this different from OpenVPN?

OpenVPN is a Virtual Private Network (VPN) solution that lets you connect a client and server over an encrypted tunnel. It is not a VPC.

vCider’s virtual network and OpenVPN share a number of characteristics, but are also different in many important ways. OpenVPN is an application that runs in user space and cannot deliver the level of performance of the vCider kernel-resident device driver software. Also, administering a VPN between more than just a few systems is an enormous administrative burden that most users are unwilling to bear.

Beyond the performance limitations of OpenVPN, vCider additionally provides gateway functions and cloud cloaking technology to support cross provider Virtual Private Clouds.

8. What kind of encryption is being used?

Operations

1. How do I configure and manage my networks?

Subscribers have access to the vCider Configuration Console where they can create new virtual networks, connect them to their enterprise network and cloak them to become their own VPC.

2. Is there an API?

Yes. vCider has published an API that enables complete programmatic control over your VPC. Everything that can be done through the Configuration Console can also be done via the API. We have also open-sourced a client library that provides examples of how to use the API. More information is available at our GitHub repository.

3. Can I assign a node to more than one virtual network?

Yes. vCider supports multiple virtual network interfaces on systems. This allows systems to be assigned to more than one virtual network. Multiple interfaces let users build specific network topologies that may be required for certain application deployments. In addition, cloud cloaking is designated on a per-network basis, so supporting multiple interfaces on a system lets systems straddle the boundary between the VPC and external networks.

4. What kind of information is provided in the Management Console?

The Management Console reports node (system) status and availability, as well as a variety of network statistics including total throughput and packets per second for each node.

5. Are the packets modified in any way?

No. The vCider device driver on the system accepts fully formed Ethernet frames from the local network driver. From the destination MAC address in the Ethernet frame, it determines which physical system is running the destination virtual interface. It then encapsulates the unmodified frame in an IP packet and sends it to the indicated physical system. There, the receiving network driver delivers to the vCider device driver the IP payload, which is the original Ethernet frame. This unmodified frame is simply passed up to the application, which receives it unaware of its true path.
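The forwarding decision described here boils down to a table lookup keyed on the destination MAC address. The sketch below only illustrates that lookup and is not vCider’s implementation: the table contents, the documentation-range addresses and the helper name `lookup_host` are all invented for the example.

```shell
#!/bin/sh
# Hypothetical mapping of virtual-interface MAC -> physical host address.
# In vCider this mapping comes from the service; these values are made up.
MAC_TABLE='02:00:00:00:00:01 203.0.113.10
02:00:00:00:00:02 198.51.100.20'

# Print the physical host that should receive a frame for the given
# destination MAC, or return non-zero if the MAC is unknown.
lookup_host() {
    printf '%s\n' "$MAC_TABLE" | awk -v mac="$1" '
        $1 == mac { print $2; found = 1 }
        END { exit (found ? 0 : 1) }'
}

# The frame itself is never rewritten; only an outer IP header is added.
dst=$(lookup_host 02:00:00:00:00:02) && echo "encapsulate frame, send to $dst"
```

Because only the outer header changes, anything carried inside the frame, including broadcast and ARP traffic, arrives at the far end exactly as it was sent.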

6. How is vCider priced?

You can create a VPC with up to 4 systems (network ports) free. Beyond that, pricing starts at $100 per month for up to 16 ports. Contact vCider for pricing on larger configurations.

Performance

1. Are there any performance bottlenecks?

No. The system was designed so that nodes could communicate directly with each other. There is no intermediate device between any of the systems on the virtual network. Performance through the virtual network gateway is dependent on the performance of the system on which the software runs.

2. How much latency does the device driver introduce?

Compared with the native network interface, packets experience very little additional delay. Compared to latency across the WAN between systems on the virtual network, the additional latency is negligible.

3. What about throughput?

Our Cassandra performance benchmarks indicate that throughput between vCider nodes is nearly identical to that of native network interfaces. If an application becomes I/O-bound, vCider has no impact on throughput. If an application becomes compute-bound, there could be a 2-4% impact on throughput.

4. Will it slow down my apps?

I first wrote about OpenFlow and Software Defined Networks a while back and there has been a lot of progress since then.

Back then I wrote:

Unfortunately… the problem of getting devices to support OpenFlow remains daunting. In fact, given the disruptive potential of OpenFlow I have serious doubt any major supplier will ever support it outside of proof of concept trials.

Given the recent announcements of products and demo gear from NEC, Marvell, Dell, Brocade, HP and others, my earlier position might have to be revisited. That said, there still remains the challenge of delivering what I called ‘inter-controllable’ devices:

It’s the external control of networks that I find so exciting. OpenFlow is just one technique for Software Defined Networks (SDN) that have the potential to revolutionize the way networks are built and managed. Clearly, virtual networks (VNs) are SDNs.

The vision of ONF is not only that the networks be interoperable, but that they also be inter-controllable. I remember Interop back in the late ’90s where the plugfests were the highlights of the conference. We take that for granted now, but there was a time when it wasn’t uncommon for one vendor’s router to be unable to route another vendor’s packets.

I’m going to spend some time in the OpenFlow Lab at Interop this week so I’ll be able to see first hand what is actually going on here.

With the OpenFlow buzz machine dialed up to 11, it was nice to read this interview with Kyle Forster, the co-founder of BigSwitch. It is, by far, the most thoughtful piece I’ve read so far on what OpenFlow is and where the opportunities lie. Regarding the disruptive potential of OpenFlow on the switch market he notes:

Look, if we’re using OpenFlow controllers and switches to do stuff that switches do today, this is going to commoditize switching. The real opportunity is to make the network do more than what it does today…

This is exactly right.

Big vendors can sit back and let the smaller companies innovate with creative network applications that take advantage of OpenFlow enabled devices, or they can build them themselves. The really smart ones are not only going to develop their own applications, but also get in front of all this and make their devices the preferred platform for everyone’s network applications.

It will be interesting to watch which vendors choose which path. The path they take could very well shape the industry for years to come.

Multi-cloud IP address failover with Heartbeat and vCider

In light of recent IaaS provider outages, it is easy to understand that organizations are hesitant to move critical infrastructure into the cloud. Yet, the flexibility and potential cost savings are too attractive to just dismiss. So, how can a responsible organization go about moving part of its network and server infrastructure into the cloud, without exposing itself to undue risk and without putting all eggs into a single IaaS provider’s basket?

The answer is to pursue a multi cloud strategy: Use more than one cloud provider. For example, instead of having all your servers with Amazon EC2, also have some with Rackspace. Or at least, have servers in multiple EC2 geographic regions. Ideally, configure one to be the backup for the other, so that in case of failure a seamless and automatic switch over may take place. This, however, is not made easy, due to proprietary management interfaces for each provider, and because traditional network tools often cannot be used across provider networks.

In this article, we will show how we can accomplish rapid failover of cloud resources, even across provider network boundaries. We use a simple example to show how to construct a seamless and secure extension of your enterprise network into the cloud, with built-in automatic failover between servers located in different cloud providers’ networks.

Setup and tools overview

For our example, we bring up two servers, one in the Amazon EC2 cloud, the other in the Rackspace data center. This demonstrates the point of being able to cross provider boundaries, but of course, if you prefer you could also just have a setup in multiple geographic regions of the same provider.

In addition, we use vCider’s virtual network technology to construct a single network – a virtual layer 2 broadcast domain – on which to connect the two servers as well as a gateway that will be placed into the enterprise network. The gateway acts as the router between the local network and the virtual network in the cloud, securely encrypting all traffic before it leaves the safety of the corporate environment.

Finally, we use Linux-HA’s Heartbeat to configure automatic address failover between those servers in the cloud, in case one of them should disappear. The IP address failover facilitated by Linux-HA requires the cluster machines to be connected via a layer 2 broadcast domain. This rules out deployment on IaaS providers like Amazon EC2 and others, which do not offer any layer 2 networking capabilities. However, as we will see, it works perfectly fine on the layer 2 broadcast domain provided by vCider.

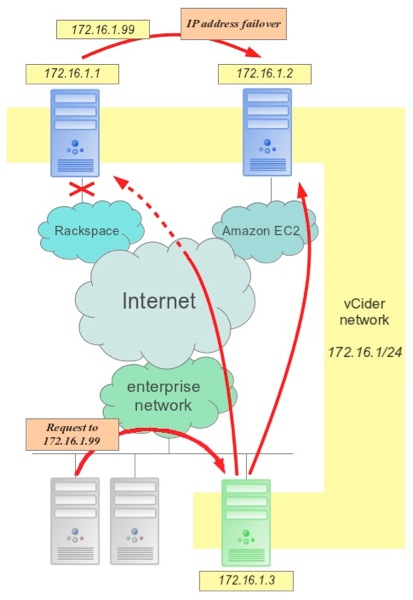

The following graphic summarizes the configuration:

Figure 1: Seamless IP addresses failover of cloud based resources: Client requests continue to be sent to a working server.

In figure 1, we can see an enterprise network at the bottom, with various clients issuing requests to address 172.16.1.99. This address is a “floating address”, which may fail over between the two servers at Rackspace and Amazon EC2. The gateway machine (in green) is part of the enterprise network as well as the vCider network and acts as router between the two. We will see in a moment that the address failover is rapid and client requests continue to be served, without major disruption.

Step 1: Constructing the virtual network

After bringing up two Ubuntu servers, one in the Amazon EC2 cloud and one at Rackspace, we now construct our virtual network. If you do not yet have an account with vCider, please go here to create one now.

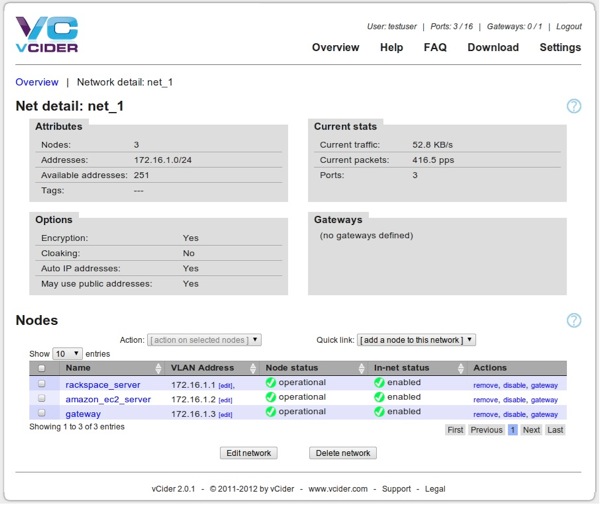

Go to the download page to pick up our installation package and follow the instructions there. In our example, we install it on two cloud-based nodes and on one host within our enterprise network. We configure them into a single virtual network with the 172.16.1.0/24 address range. After we are done, the network looks like this in the vCider control panel:

Figure 2: The vCider control panel after the nodes have been added to the virtual network.

We can see here that virtual IP addresses have been assigned to each node, which can already start to send and receive packets using those addresses.

Step 2: Configuring IP address failover (installing Heartbeat)

Our floating IP address will be managed by Linux-HA’s Heartbeat, a well established and trusted solution for high-availability clusters with server and IP address failover. Thanks to vCider’s virtual layer 2 broadcast domain, Heartbeat can finally also be used in IaaS provider networks that do not natively support layer 2 broadcast.

Heartbeat requires a little bit of configuration. Let’s go through it step by step:

Add your hosts to the /etc/hosts file and change hostname

First, add these two entries to your /etc/hosts file on BOTH your server hosts:

    172.16.1.1 rackspace-server
    172.16.1.2 amazon-ec2-server

This allows us to configure Heartbeat by referring to the cluster nodes via easy-to-remember server names. Please note that we are using the vCider virtual addresses here. Now change the hostname on each node via the hostname command:

    $ sudo hostname rackspace-server

Do the same on the Amazon EC2 server, using its own name.
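Heartbeat matches the node names listed in ha.cf against each host’s own name, so it is worth a quick sanity check before going further. The helper below is a minimal sketch and not part of the original instructions: `hosts_has_names` is a made-up function that verifies the given names appear in a hosts file, shown here with the node names used in the ha.cf file.

```shell
#!/bin/sh
# Return success only if every given name appears as a hostname in the file.
hosts_has_names() {
    file=$1; shift
    for name in "$@"; do
        awk -v n="$name" '
            /^[ \t]*#/ { next }                       # skip comment lines
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit (found ? 0 : 1) }' "$file" || {
            echo "missing: $name" >&2
            return 1
        }
    done
}

# Check the local hosts file for both cluster node names.
if hosts_has_names /etc/hosts rackspace-server amazon-ec2-server; then
    echo "both cluster names are present"
fi
```

Running `uname -n` on each node should print the same name that ha.cf uses for that node.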

Install Heartbeat

On your Rackspace and Amazon EC2 server, install Heartbeat:

    $ sudo apt-get install heartbeat

On the Rackspace server, create the configuration file /etc/ha.d/ha.cf with the following contents:

    logfacility daemon
    keepalive 2
    deadtime 15
    warntime 5
    initdead 120
    udpport 694
    ucast vcider0 172.16.1.2
    auto_failback on
    node amazon-ec2-server
    node rackspace-server
    use_logd yes
    crm respawn

Note that we are listing both our cluster nodes by name and refer to the IP address of the other node, as well as ‘vcider0’, the name of the vCider network device. For more details about the Heartbeat configuration options, please refer to Heartbeat’s documentation.

On the second node, the Amazon EC2 server, create an exact copy of this file, except that the ‘ucast vcider0’ line should refer to the IP address of the Rackspace server, like so:

    ucast vcider0 172.16.1.1
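Since the two files differ in just that one line, the second copy can be derived from the first rather than typed again. A minimal sketch, assuming the file is fed in on standard input; `retarget_ucast` is a hypothetical helper name and the three-line sample is an abbreviated stand-in for the full ha.cf:

```shell
#!/bin/sh
# Rewrite the 'ucast vcider0 <ip>' line of an ha.cf read from stdin so that it
# points at the given peer address; all other lines pass through unchanged.
retarget_ucast() {
    sed "s/^ucast vcider0 .*/ucast vcider0 $1/"
}

# Example: turn the Rackspace node's file into the Amazon EC2 node's file.
printf 'keepalive 2\nucast vcider0 172.16.1.2\nnode rackspace-server\n' \
    | retarget_ucast 172.16.1.1
```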

We now need to establish the authentication specification for both cluster nodes, so that they know how to authenticate themselves to each other. Since all communication on a vCider network is fully encrypted and secured, and since with vCider we can easily cloak our network from the public Internet, we use a simplified setup here, which saves us the creation and exchange of keys. Please create the file /etc/ha.d/authkeys on both cluster nodes, with the following contents:

    auth 1
    1 crc

Then set the permissions on these files:

    $ sudo chmod 600 /etc/ha.d/authkeys
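The ‘crc’ method only detects corruption and does not authenticate the peers. For setups where the simplification above is not acceptable, Heartbeat’s authkeys format also accepts ‘sha1’ with a shared secret. The sketch below shows one way to generate such a file body; the helper name `make_authkeys` and the key length are assumptions made for this example, and it is not part of the original walkthrough.

```shell
#!/bin/sh
# Emit an authkeys file body that uses SHA1 with a random shared secret
# instead of the unauthenticated 'crc' method.
make_authkeys() {
    # 32 random bytes rendered as 64 hex characters
    key=$(head -c 32 /dev/urandom | od -An -tx1 | tr -d ' \n')
    printf 'auth 1\n1 sha1 %s\n' "$key"
}

make_authkeys
```

The output has to be generated once and copied to both nodes, since each call produces a different key, and the same chmod 600 step applies.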

Heartbeat can now be started (on both nodes):

    $ sudo /etc/init.d/heartbeat start

Configure Heartbeat

Heartbeat comes with its own configuration command:

    $ sudo crm configure edit

In the text editor, you will see a few basic lines. Edit the file, so that it looks something like this:

    node $id="923cbacf-00af-4b6c-a8ca-2e4aae780038" amazon-ec2-server
    node $id="aca44dea-e6f8-4c4c-94ab-087568c32e36" rackspace-server
    primitive ip1 ocf:heartbeat:IPaddr2 \
        params ip="172.16.1.99" nic="vcider0:1" \
        op monitor interval="5s"
    primitive ip1arp ocf:heartbeat:SendArp \
        params ip="172.16.1.99" nic="vcider0:1"
    group FailoverIp ip1 ip1arp
    order ip-before-arp inf: ip1:start ip1arp:start
    property $id="cib-bootstrap-options" \
        dc-version="1.1.5-01e86afaaa6d4a8c4836f68df80ababd6ca3902f" \
        cluster-infrastructure="Heartbeat" \
        expected-quorum-votes="1" \
        stonith-enabled="false" \
        no-quorum-policy="ignore"

In particular, make sure that your two cluster nodes are mentioned and that you define the two ‘primitives’ for the IP address and the IP failover, which mention our floating IP address 172.16.1.99. Also note the definition of the ‘FailoverIp’ group and the ‘order’. Use the crm_mon command to ensure that Heartbeat reports both cluster nodes in working order.

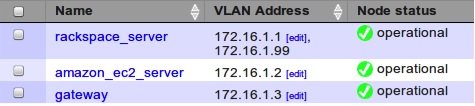

Within a few seconds, you will notice that Heartbeat has configured the floating address on one of the cluster nodes. You can see it as an alias on the vcider0 network device when using the ifconfig command. This is also reflected in the vCider control panel:

Figure 3: Heartbeat has configured the floating IP address on one of the cluster nodes, which is now shown in the vCider control panel.

Allowing gratuitous ARP to be accepted

A so-called ‘gratuitous ARP’ packet is sent out by Heartbeat in case of an IP address failover. This packet updates the ARP cache on the gateway machine (and any other attached device, for that matter). The ARP cache is what allows a network-connected device to translate an IP address to a local layer 2 address, which is needed for delivery of packets in the local network. Normally, an ARP request is sent by a host in order to learn a local machine’s MAC address. The response back then updates the ARP cache of the sender. A gratuitous ARP, however, is an unsolicited response, sent to the layer 2 broadcast address in the LAN and thus seen by all connected devices. It updates everyone’s cache, even without them having to ask for it.

Acceptance of gratuitous ARP packets is disabled by default on most systems. Therefore, on our gateway machine, we need to switch it on. As root, issue this command:

    # echo 1 > /proc/sys/net/ipv4/conf/all/arp_accept
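A value written to /proc this way does not survive a reboot, so it can be handy to verify the setting later. The check below is a small sketch; `arp_accept_enabled` is a made-up helper name, and the optional path argument exists only so the function can be pointed at another file.

```shell
#!/bin/sh
# Report whether gratuitous ARP acceptance is enabled. The path argument
# defaults to the 'all' setting used above; pass another file to test.
arp_accept_enabled() {
    path=${1:-/proc/sys/net/ipv4/conf/all/arp_accept}
    [ "$(cat "$path" 2>/dev/null)" = "1" ]
}

if arp_accept_enabled; then
    echo "arp_accept is on for this host"
else
    echo "arp_accept is off on this host"
fi

# To keep the setting across reboots, the usual approach is a line such as
#   net.ipv4.conf.all.arp_accept = 1
# in /etc/sysctl.conf, applied with 'sysctl -p'.
```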

Dealing with send_arp

Normally, Heartbeat would be ready to go at this point. However, there is a small problem with the ‘send_arp’ utility, which comes as part of Heartbeat. This utility is used during IP address failover to send the gratuitous ARP packet as a layer 2 broadcast to all other devices connected on the layer 2 network, in order to inform them about the new location of the floating IP address. A small bug in send_arp prevents it from working 100% correctly under all circumstances. The Heartbeat developers have recently fixed this issue, but that fix is not in all distros’ repositories yet. For example, Fedora 16 already uses the latest version by default, while Ubuntu 11.10 still does not have this fix. Therefore, to be absolutely sure, we simply replace send_arp with a similar utility, called ‘arping’. Just follow these steps:

    $ sudo apt-get install iputils-arping   # 'yum install arping' on RPM systems
    $ sudo mv /usr/lib/heartbeat/send_arp /usr/lib/heartbeat/send_arp.bak

Create a new file in /usr/lib/heartbeat/send_arp and give it execute permissions. The content of this file should be:

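    #!/bin/sh
    # Drop-in replacement for send_arp that delegates to arping.

    ARPING=/usr/bin/arping
    REPEAT=$4       # 4th argument: number of ARP packets to send
    INTERFACE=$7    # 7th argument: network interface
    IPADDRESS=$8    # 8th argument: floating IP address

    # -U: unsolicited (gratuitous) ARP, -b: keep broadcasting,
    # -c: packet count, -I: interface, -s: source address
    "$ARPING" -U -b -c "$REPEAT" -I "$INTERFACE" -s "$IPADDRESS" "$IPADDRESS"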
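The wrapper relies on the position of its arguments rather than on parsing flags. As far as I can tell, Heartbeat’s resource agents call send_arp with an interval flag, a repeat flag and a pidfile flag ahead of the interface and address, which is why the script reads positions 4, 7 and 8. Treat that call shape as an assumption; the sketch below just demonstrates the mapping with a made-up argument list and a hypothetical function name.

```shell
#!/bin/sh
# Show which positional parameters the wrapper picks up, given an assumed
# send_arp-style argument list (flags first, then interface and address).
show_wrapper_args() {
    echo "repeat=$4 interface=$7 ip=$8"
}

# Assumed call shape: -i <interval_ms> -r <repeat> -p <pidfile> <nic> <ip> ...
show_wrapper_args -i 200 -r 5 -p /tmp/send_arp.pid vcider0 172.16.1.99 auto
```

If a given Heartbeat version passes its arguments in a different order, the three assignments in the wrapper would need to be adjusted to match.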

Step 3: Testing our setup

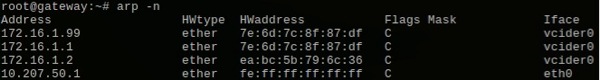

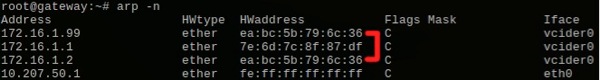

All is configured and in order now, so let’s test the failover. First, log into the gateway machine and have a look at the ARP cache, using the arp -n command:

Figure 4: The ARP cache on the gateway machine before failover.

We see that the MAC address (‘HWaddress’) for the floating IP address is the same as for 172.16.1.1, which in our example is the MAC address of the vCider interface on the Rackspace server. We will cause an IP address failover in a moment, by issuing a command to put one of the cluster nodes into standby mode. But before we do so, start a ping 172.16.1.99 either on the gateway node or on one of the enterprise clients. We will observe what it does during the failover.

While the ping is running, let’s log into any one of the cluster nodes in a different terminal and let’s take down the node that currently holds the floating IP address:

    $ sudo crm node standby rackspace-server

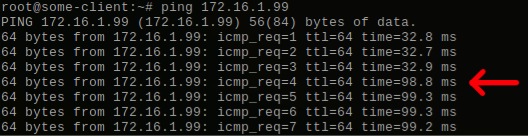

If you now take a look at the ongoing ping output, you see something like this:

Figure 5: Even during IP address failover, the floating IP address can still be reached.

The red arrow marks the moment at which we took down the first cluster node and Heartbeat moved the floating IP address over to the second node. Our gateway machine is located on the US east coast, the Rackspace server in Chicago, while the EC2 server is on the US west coast. Because the floating IP address failed over to a node that’s further away, the network round trip time naturally went up. The key takeaway point, however, is that the floating IP address remained accessible to clients, even as it failed over into the cloud of another IaaS provider!

To see what happened, we can take a look again at the ARP cache of the gateway:

Figure 6: The gateway’s ARP cache after failover.

In figure 6 we highlighted the new MAC address of the floating IP address, showing that it is now identical to the vCider interface’s MAC address of the second cluster node. As a result, all clients connected in the vCider virtual network are able to continue to access the floating IP address.
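The before/after comparison of the ARP cache can also be scripted. The sketch below parses `arp -n` style text and reports which node currently shares a MAC address with the floating IP. The column layout is the usual net-tools one. Every address and MAC in the sample captures is made up for illustration, apart from the floating IP 172.16.1.99.

```python
def parse_arp_table(arp_output):
    """Return {ip: mac} from `arp -n` style text (net-tools column layout assumed)."""
    table = {}
    for line in arp_output.strip().splitlines():
        fields = line.split()
        # Skip the header row and incomplete entries that carry no MAC address.
        if len(fields) < 5 or fields[0] == "Address" or fields[1] != "ether":
            continue
        table[fields[0]] = fields[2].lower()
    return table


def nodes_sharing_mac(table, floating_ip):
    """Return the other IPs whose MAC matches the floating IP's current MAC."""
    mac = table[floating_ip]
    return sorted(ip for ip, other in table.items()
                  if other == mac and ip != floating_ip)


# Hypothetical captures taken on the gateway before and after the failover.
BEFORE = """\
Address                  HWtype  HWaddress           Flags Mask            Iface
172.16.1.1               ether   02:00:00:aa:00:01   C                     eth1
172.16.1.2               ether   02:00:00:aa:00:02   C                     eth1
172.16.1.99              ether   02:00:00:aa:00:01   C                     eth1
"""
AFTER = BEFORE.replace(
    "172.16.1.99              ether   02:00:00:aa:00:01",
    "172.16.1.99              ether   02:00:00:aa:00:02",
)

before_holder = nodes_sharing_mac(parse_arp_table(BEFORE), "172.16.1.99")
after_holder = nodes_sharing_mac(parse_arp_table(AFTER), "172.16.1.99")
print("before:", before_holder, "after:", after_holder)
```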

Replicating content

One aspect we have left out of this blog for brevity’s sake is the replication of content across the failover servers. To accomplish a transparent failover, these machines have to be able to serve identical content to clients. Naturally, the same server software needs to be installed and the content replication itself can be accomplished in a number of ways: Static file duplication, maybe via rsync, a full cluster setup of whatever application or database you are running, or even block level replication via DRBD or similar. This is a concept we hope to explore more in a future blog post.

Conclusion

Linux-HA with Heartbeat is a trusted, reliable high-availability cluster solution, which can ensure continuous availability of resources even in the face of server failure. Key to this functionality is the seamless, rapid update of everyone’s ARP cache via a gratuitous ARP packet, which is sent on the local network as layer 2 broadcast.

In cloud networks, and especially across geographic regions and providers, you do not have a local network and therefore, such an IP address failover is normally not possible. Without a layer 2 broadcast domain, Heartbeat is not able to update ARP caches in case of an IP address failover, which results in service interruption until the ARP cache entries finally time out. This restricts the applicability of Linux-HA in cloud environments and limits administrators’ ability to set up high-availability clusters.

Because vCider provides a true layer 2 broadcast domain, sending the gratuitous ARP is possible again, no matter where the nodes in the virtual network are located. Therefore, Linux-HA with Heartbeat can now be used to facilitate IP address failover, even across geographic regions or IaaS provider network boundaries.

Big, Flat Layer 2 Networks Still Need Routing

There’s a lot of talk these days about how the solution to all networking problems simply boils down to flattening the network and building Big Flat Layer 2 Networks (BFL2Ns). Juniper is one of the most explicit about their strategy to flatten the network, but all the vendors are making a big deal about their layer 2 strategies.

Big Flat Networks

The appeal of this approach is very compelling. Layer 2 is fast and supports plug-and-play administrative simplicity. Furthermore, some of the most compelling virtualization features including live VMotion require layer 2 adjacency (although there is some confusion on this point) and the single-hop performance it provides is critical for converged storage/data networks.

What you don’t read much about, though, is that there were some very good reasons to split layer 2 broadcast domains and most of those reasons have not gone away. The problems invariably involve some kind of runaway multicast or broadcast storm. Most network admins have experienced the frustration of struggling to figure out the root cause of these kinds of floods.

When you stretch layer 2 over the WAN, you’re asking for trouble since this relatively low bandwidth link would be the first to go.

Part of the problem is the simplistic nature of how links are used at layer 2. The Spanning Tree Protocol (STP) prevents forwarding loops, but at the same time can funnel traffic into hotspots that can easily overwhelm a single link. TRILL, the proposed replacement, as well as other vendor-specific technologies, address some of these problems and are already part of vendors’ flat layer 2 roadmaps.

From a technical perspective, all this makes sense and big, flat layer 2 networks have the potential to deliver their promised goals. Nevertheless, I remain skeptical. But for reasons that are completely unrelated to the technology.

I’ve read about technical problems of big layer 2 networks based on the increased likelihood of failure due to unintentional broadcast storms and other error conditions. While these risks are certainly real, I haven’t read anything that could not be solved with good engineering.

No, what troubles me about these kinds of networks is how they will be used and the expectation of those that deploy them. By this I mean, when you actually go and build one of these BFL2Ns, you’re very likely going to need to segment it into several Smaller, Nearly Flat Networks, bringing you back almost to where you started.

Why? Already, today’s smallish layer 2 networks are routinely segmented into VLANs. What people are looking for from these BFL2Ns is the flexibility not only to segment them into VLANs, but also to enable every endpoint to potentially gain access through any edge device. VMotion from anywhere, to anywhere is a simple way to think about this.

And this will be possible with a BFL2N simply by making every port a trunking port for every VLAN. But if you think about that for a minute, you’ll notice that once you do that, you’ve undermined the VLAN segmentation that you started with and have essentially built a giant LAN.

Of course, if you don’t want that, then you’ll prune them, and restrict parts of your BFL2N from other parts by not trunking some VLANs in certain places, and that should work just fine.

But don’t forget there is a name for this kind of networking: Routing.

Why Should I Care More About OpenFlow than Quantum?

That’s the question I want answered this week at the Applied OpenFlow Symposium.

I hope I get a good answer.

I was not able to attend last week’s Open Networking Summit, but since some of the presentations are on YouTube already, I’m going to try to watch them all. I just finished watching Martin’s presentation and it provided a nice historical background on OpenFlow and a great perspective on how it fits in the broader vision of Software Defined Networking. I highly recommend it.

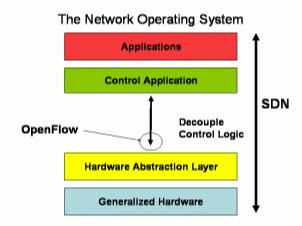

The slide that says it all is shown around 20:50. There Martin says:

OpenFlow is ‘a’ interface to the switch. And in a fully built system it’s of very little consequence. If you changed it, nothing would know.

I’ve recreated one of Martin’s slides here to show how he illustrated this. As you can see SDNs can be implemented through a variety of mechanisms, with OpenFlow or not.

This should not come as a surprise to anyone that’s been following OpenFlow, but it’s a point that I think has gotten lost in all the media attention surrounding SDNs.

One of the things I wanted to learn more about at the Open Networking Summit was what work is being done on these other interfaces? It seems to me that the application interface is much more important to users than the internal protocol to the hardware. Maybe some of the other presentations will address this directly.

I know companies are working on them. Cisco, VMware for sure. BigSwitch Networks has been talking about these kinds of applications for a while now and Nicira is one of the major contributors to OpenStack’s Quantum Network API.

It seems to me that a standard Quantum-like API would be more valuable toward achieving the objectives of a SDN, than OpenFlow.

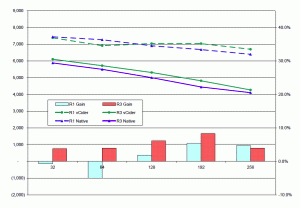

Virtual Networks can run Cassandra up to 60% faster….

In my previous post I described some of the challenges in running a noSQL database like Cassandra on EC2 and how a virtual network could help. Presented here is a performance comparison between running Cassandra on EC2 using the native interfaces vs. interfaces on a private, vCider virtual network.

Cassandra performance is generally measured by how fast it can insert into the database new key-value pairs. These pairs become rows in a column and can be any size, although they are typically pretty small, often less than 1K. Database performance is frequently tested with small record sizes to isolate database performance and not the performance of the I/O, disk or network components of the system.

However, in this case we want to isolate the impact of network performance on overall database performance. So we measured performance over a range of network intensive runs until other bottlenecks began to appear. Our network tests ran with increasing column sizes and replication factors. The larger data size runs began to show disk and I/O bottlenecks when there were more than 256 bytes (with a replication factor of 3) so we varied column widths from 32 up to a maximum of 256 bytes.

One important point to note: Database performance is highly application dependent, and users often tune their environment to optimize performance. Our tests are in no way comprehensive, but do illustrate the performance impact of a virtual network on the system within the range of configurations and column sizes actually tested. Obviously, for other situations that are either more or less network bound, your performance may vary.

Cluster Configuration:

We set up a 4-node Cassandra cluster in a single EC2 region. For our baseline measurements we configured it with the Listen, Seed and RPC interfaces all on the node’s private interface. The client was a fifth EC2 instance running a popular Cassandra stress test load generator. The client was running in the same EC2 availability zone.

For the virtual network configurations, we set up the Seed and RPC interfaces on one virtual network, and the Listen interface on a second. This gave us the flexibility to run tests where we could encrypt only the node traffic while letting client traffic remain unencrypted. The interfaces on the virtual network are actually virtual interfaces, so in reality they all use the private interface on the instance as well.

For the tests we chose to run unencrypted, fully encrypted, and node-only encrypted networks. We also ran tests inserting a single copy of the data (R1, replication factor = 1) and again when writing 3 copies (R3, replication factor 3) spread across 3 nodes in the cluster. Since the nodes are responsible for replicating data, running with R3 generates a lot of internode traffic, while with R1, most of the traffic is client traffic.

The summary results for running a 4 node cluster are:

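Cassandra Performance on vCider Virtual Network

Replication Factor 1

| Column size | 32 bytes | 64 bytes | 128 bytes | 192 bytes | 256 bytes |
| --- | --- | --- | --- | --- | --- |
| v. Unencrypted | 8.2% | 0.8% | -2.3% | -2.3% | -6.7% |
| v. Encrypted | 63.8% | 55.4% | 60.0% | 53.9% | 61.7% |
| v. Node Only Encryption | -0.7% | -5.0% | 1.9% | 5.4% | 4.7% |

Replication Factor 3

| Column size | 32 bytes | 64 bytes | 128 bytes | 192 bytes | 256 bytes |
| --- | --- | --- | --- | --- | --- |
| v. Unencrypted | -4.5% | -4.7% | -5.8% | -4.5% | -1.5% |
| v. Encrypted | 31.5% | 29.6% | 31.4% | 27.3% | 29.9% |
| v. Node Only Encryption | 3.8% | 3.9% | 6.1% | 8.3% | 4.0% |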

The complete data and associated charts are available here.

There is tremendous EC2 performance variability and our experiments tried to adjust for that by running 10 trials for each column size and averaging them. Averaged across all column widths, the performance was:

Replication Factor 1

v. Unencrypted: -3.7%

v. Encrypted: +59%

v. Node Only Encryption: +1.3%

Replication Factor 3

v. Unencrypted: -4.2%

v. Encrypted: +30%

v. Node Only Encryption: +5.2%
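As a quick sanity check, the averages can be recomputed from the per-column percentages listed earlier. The sketch below takes the plain mean of the five column sizes for each row, which is an assumption about how the summary was produced. Five of the six rows land on the reported averages. The Replication Factor 1 unencrypted row, as printed, averages to about -0.5% rather than -3.7%, so the printed row may have lost a sign or the reported figure may have been averaged over the raw trials instead.

```python
from statistics import mean

# Per-column results as printed in the post (percent change v. native interfaces).
results = {
    ("R1", "unencrypted"): [8.2, 0.8, -2.3, -2.3, -6.7],
    ("R1", "encrypted"): [63.8, 55.4, 60.0, 53.9, 61.7],
    ("R1", "node-only"): [-0.7, -5.0, 1.9, 5.4, 4.7],
    ("R3", "unencrypted"): [-4.5, -4.7, -5.8, -4.5, -1.5],
    ("R3", "encrypted"): [31.5, 29.6, 31.4, 27.3, 29.9],
    ("R3", "node-only"): [3.8, 3.9, 6.1, 8.3, 4.0],
}

# Plain mean over the five column sizes, rounded to one decimal place.
averages = {key: round(mean(values), 1) for key, values in results.items()}

for (replication, mode), value in averages.items():
    print(f"{replication} {mode}: {value:+.1f}%")
```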

As you might expect, the performance while running on a virtual network was a little slower than running on the native interfaces.

However, when you encrypt communications (both node and client) the performance of the virtual network was faster by nearly 60% (30% with R3). Since this measurement is primarily an indication of the client encryption performance, we also measured performance of the somewhat unrealistic configuration when only node communications were encrypted. Here the virtual network performed better by between 1.3% and 5.2%.

The difference for the unencrypted virtual network between R3 (-4.2%) and R1 (-3.7%) is understandable, since R3 is more network intensive than R1. However, the vCider virtual network performs encryption in the kernel, which seems to be faster than what Cassandra can do natively. So when node encryption is turned on, the virtual network performance gains are greater with R3 (5.2% v. 1.3%), since more data needs to be encrypted.

We expect similar performance characteristics across regions. However, these gains will only be visible when the cluster is configured to hide all of the WAN latency by requiring only local concurrency. The virtual network lets you assign your own private IPs for all Cassandra interfaces so the standard Snitch can be used everywhere as well.

Once we finish these multi-region tests, we’ll publish the results too. We’ll also put everything in a public repository that includes all Puppet configuration modules as well as the collection of scripts that automate nearly all of the testing described here.

So, netting this out, if you’re running Cassandra in EC2 (or any other public cloud) and want encrypted communications, running on a virtual network is a clear winner. Here, not only is it 30-60% faster, but you don’t have to bother with the point-to-point configurations of setting up a third party encryption technique. Since these run in user space, it’s not surprising that dramatic performance gains can be achieved with the kernel based approach of the virtual network.

If you are running on one of the other popular noSQL databases, similar results may be seen as well. If you have any data on this, we’d love to hear from you. If you want to try vCider with Cassandra or any other noSQL database you can register for an account at my.vcider.com. It’s free!

VEPA Loopback Traffic Will Overwhelm the Network

In my previous post I wrote that under most reasonable assumptions about east-west traffic patterns and virtualization density growth, VEPA loopback traffic could overwhelm your network. Here are some numbers that illustrate the point.

Let’s say you had 24 applications, each requiring 12 servers for deployment for a total of 288 workloads. Let’s also assume that each server required 0.5G of bandwidth for acceptable performance. For simplicity, let’s further assume that this 0.5G is distributed uniformly among the 11 other servers required for the application (this is a bad assumption, but we’ll relax it later to show the conclusion remains the same).

We now virtualize this environment in a rack of 12 3U vHosts, each with 4 10G NICs. Each system has 24 cores (6 sockets @ 4 cores/socket) running only one VM per core, for a total of 288 VMs (one for each workload). In this configuration, each NIC supports 6VMs, providing 1.67Gbps (10G/6VMs) per VM of network bandwidth capacity.

So far, all is good. 1.67G capacity per VM when the app only uses 0.5G. This is even more than the 1G capacity that the NICs had when they were running on physical systems before virtualization. But now let’s take a look at how much of that traffic gets looped back.

Best case, there could be zero loopback. This would occur when one workload from each app was assigned to one of the 12 available hosts (i.e. app workloads uniformly distributed across hosts). Since all traffic is between workloads running on other hosts, nothing needs to be looped back.

Worst case would be when an entire app is run on a single host and all traffic would have to loop back. Since 24 cores can run 24 workloads, two complete applications can run within a single host. Each workload produces 0.5G of traffic, so two complete apps would produce 12Gbps (24 x 0.5G). Looping that back would require a total of 24Gbps. Spreading that across 4 10GE NICs would consume 24G/40G or 60% of total capacity. Everything still looks fine.

Here the uniform traffic distribution assumption does not matter since even with 100% looped back there is excess network capacity.
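The arithmetic for this first configuration can be written out as a short Python sketch. All figures are taken from the example above. The variable names are only for illustration, and the factor of two for looped-back traffic follows the post’s assumption that VEPA traffic leaves and re-enters through the host’s NICs.

```python
HOSTS = 12
CORES_PER_HOST = 24          # 6 sockets x 4 cores, one VM per core
NICS_PER_HOST = 4
NIC_GBPS = 10.0
GBPS_PER_WORKLOAD = 0.5

vms_per_host = CORES_PER_HOST
total_vms = HOSTS * vms_per_host                  # 288 workloads
vms_per_nic = vms_per_host / NICS_PER_HOST        # 6 VMs share each NIC
per_vm_capacity_gbps = NIC_GBPS / vms_per_nic     # about 1.67 Gbps

# Worst case: two complete 12-server apps on one host, everything looped back.
generated_gbps = vms_per_host * GBPS_PER_WORKLOAD     # 12 Gbps
looped_gbps = 2 * generated_gbps                      # out and back in: 24 Gbps
host_capacity_gbps = NICS_PER_HOST * NIC_GBPS         # 40 Gbps
worst_case_utilization = looped_gbps / host_capacity_gbps

print(f"{total_vms} VMs, {per_vm_capacity_gbps:.2f} Gbps per VM, "
      f"worst-case NIC utilization {worst_case_utilization:.0%}")
```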

Now fast forward a year or two to when virtualization densities grow so that you can run 48 cores on each system and each can run 2 VMs. Instead of 12 vHosts, you now only need 3. Here, with uniform traffic, the best case would assign 4 workloads from each app on each host (again, distributing the workloads uniformly across the hosts). Each of these workloads would need to loop back 3/11 of its traffic, totaling 1.1Gbps per app (4 workloads x 3/11 x 0.5G x 2 for loopback). With 24 apps, that would total about 26.2Gbps, just for the loopback traffic.

That’s more than 65% of the peak capacity of the 4 10GE NICs on the system. The rest of the traffic would require 1.45Gbps per app (4 workloads x 8/11 x 0.5G) or 34.9Gbps. Together this is over 61Gbps or more than 150% of the peak theoretical performance of the NICs! And that’s the best case.

Now let’s say you were lucky enough to have a traffic pattern that allowed for the possibility of distributing the VMs so that there was zero traffic looped back. Although highly unlikely, this situation might be possible with multi-tiered apps where workloads communicated with only a small subset of other workloads and workloads were deliberately placed to reduce loopback traffic. Even then, the total bandwidth necessary per app would be 2Gbps (4 workloads x 0.5G), or 48G total for the host. That is still 120% of peak capacity.

Of course you can add more servers and/or more NICs to get this all to work, but that reduces virtualization density and increases the required number of switch ports. To get back to the numbers of the first example you would need to quadruple the number of NICs, negating all the benefit of higher virtualization densities on the network.
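The higher-density numbers can be checked the same way. This sketch uses only the figures from the example (24 apps of 12 servers, 0.5G per workload, 3 hosts with 4 10GE NICs each). The variable names are illustrative, and the last line assumes that "the numbers of the first example" means 6 VMs per NIC.

```python
APPS = 24
SERVERS_PER_APP = 12
GBPS_PER_WORKLOAD = 0.5
HOSTS = 3
VMS_PER_HOST = 48 * 2        # 48 cores, 2 VMs per core
NICS_PER_HOST = 4
NIC_GBPS = 10.0

host_capacity_gbps = NICS_PER_HOST * NIC_GBPS      # 40 Gbps
local_per_app = SERVERS_PER_APP // HOSTS           # 4 workloads of each app per host
peers = SERVERS_PER_APP - 1                        # 11 other servers in the app
local_peers = local_per_app - 1                    # 3 of them on the same host
remote_peers = peers - local_peers                 # 8 on other hosts

# Uniform traffic: 3/11 of each workload's traffic is looped back (counted twice).
loopback_gbps = APPS * local_per_app * (local_peers / peers) * GBPS_PER_WORKLOAD * 2
external_gbps = APPS * local_per_app * (remote_peers / peers) * GBPS_PER_WORKLOAD
best_case_load = (loopback_gbps + external_gbps) / host_capacity_gbps

# Even with zero loopback, every workload still sends 0.5G through the NICs.
zero_loopback_gbps = APPS * local_per_app * GBPS_PER_WORKLOAD
zero_loopback_load = zero_loopback_gbps / host_capacity_gbps

# NICs needed to return to 6 VMs per NIC, as in the first example.
nics_for_first_example_ratio = VMS_PER_HOST // 6

print(f"loopback {loopback_gbps:.1f} Gbps, external {external_gbps:.1f} Gbps, "
      f"best case {best_case_load:.0%}, zero loopback {zero_loopback_load:.0%}, "
      f"{nics_for_first_example_ratio} NICs needed")
```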

We’re very pleased to have been selected for the GigaOm Structure Launchpad Event, where we’ll be one of a handful of start-ups introducing new solutions for the cloud. What’s our solution?

We’re building the industry’s first on-demand multi-layer distributed virtual switch for the cloud. Using our switch, you will be able to connect all of your systems, wherever they may be located, in a single layer 2 broadcast domain. This gives you the network-address control and security you’re used to having in your data center, but now in a cloud or hybrid infrastructure.

Today, cloud providers don’t offer layer 2 connectivity among their systems. Worse yet, even if they did, you wouldn’t be able to extend your internal LAN out to the cloud without all sorts of complicated gateways and NAT devices along the way. And if you wanted to just connect systems between IaaS providers, you’re pretty much dead in the water.

Secure Virtual Network Gateway for Hybrid Clouds

Secure access to your vCider VPC is provided through a Virtual Network Gateway.

A Virtual Network Gateway is an on-premises system that has been added to a virtual network. The gateway system must also be on the local enterprise network that requires access to the VPC.

Setting up a virtual network gateway is fast and easy.

vCider software automatically detects which physical networks are accessible from each system on the virtual network. The vCider Management Console presents a list of all these potential network connections. Through the console, the user then selects the system (or systems) to be configured as a gateway.

Once the gateway is specified, that system is configured to route packets from the secure encrypted external virtual network on to the physical network it is connected to.

vCider then automatically configures all the other systems on the virtual network with routes that specify the gateway system as the path to the enterprise LAN.

Internal to the enterprise, the firewall must be configured to enable access to the appropriate networks and a route must be specified.
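To picture the kind of route that ends up on the non-gateway systems, here is a hedged Python sketch that generates standard Linux `ip route add` commands. It is not vCider’s implementation, which the post does not describe. The function name, the enterprise LAN prefix and all addresses are hypothetical and chosen only for illustration.

```python
import ipaddress


def gateway_route_commands(enterprise_lan, gateway_ip, node_ips):
    """Return {node_ip: command} routing the enterprise LAN via the gateway.

    The gateway itself is skipped because it reaches the LAN directly.
    """
    lan = ipaddress.ip_network(enterprise_lan)      # validates the prefix
    gateway = ipaddress.ip_address(gateway_ip)      # validates the address
    commands = {}
    for node in node_ips:
        if ipaddress.ip_address(node) == gateway:
            continue
        commands[node] = f"ip route add {lan} via {gateway}"
    return commands


# Hypothetical virtual network with the gateway at 172.16.1.1.
routes = gateway_route_commands(
    enterprise_lan="10.1.0.0/16",
    gateway_ip="172.16.1.1",
    node_ips=["172.16.1.1", "172.16.1.2", "172.16.1.3"],
)
for node, command in routes.items():
    print(node, "->", command)
```

On the enterprise side, the matching route would point the virtual network’s prefix at the gateway’s LAN address, which is the "route must be specified" step mentioned above.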

Moving to the cloud? Virtual networks keep you in control

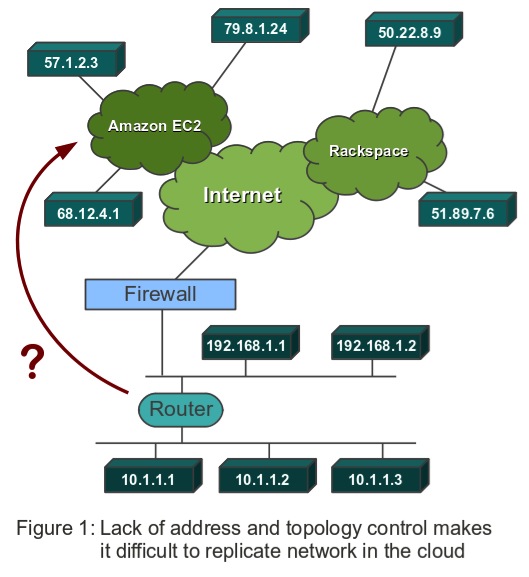

Multi-tier applications, cluster setups, failover configurations. These are all daily concerns for any server or network administrator responsible for deploying and maintaining non-trivial projects. Yet it is exactly these things that are greatly complicated when you consider moving your applications to cloud-based IaaS providers, such as Amazon EC2. It is somewhat ironic that in those heavily virtualized environments one of the most important types of virtualization is largely missing: The virtualization of the network topology. Since we at vCider provide solutions for the creation of virtualized networks, I want to take a moment and talk about why you need the ability to create your own virtual network topologies and how this can greatly simplify your life when you are moving into the cloud.

In the cloud, you have no control over the network

There are two fundamental problems. First, there is the simple fact that you don’t have any control over the IP address assignment when you start a machine instance on Amazon or Rackspace. You don’t control the network or how the IaaS provider is using it. Second, because you don’t control the network, you cannot set up your own layer 2 broadcast domains either. Let’s take the example of simply wanting to set up a duplicate or clone of your on-premise network, maybe for redundancy and availability. The clone should be an exact replica of your original network, if possible.

This is difficult for a number of reasons. Let’s start with the IP addresses, over which you normally do not have any control in most IaaS environments. Some allow you to fix your public addresses (such as Amazon’s “elastic IP addresses”), but realistically there are usually only very few public ‘entry points’ to your site. Most of your site’s or network’s internal traffic will use internal IP addresses. Your front-end server uses an internal, private address to connect to your application server, your application server uses an internal address to connect to your database server, and so on. You want to use internal addresses for communication wherever possible: In most IaaS environments it is faster, cheaper and possibly also more secure to do so. But you can’t just configure those addresses in your web-server, application server or database configuration files, since whenever a machine reboots, its addresses are randomly chosen for you – most likely not even from the same subnet. People try to work around this by using dynamic DNS, with each node having to register itself first, but then of course you are adding yet another moving piece to the puzzle, further complicating your architecture.

This point about subnets leads us to the second issue: Your inability to create layer 2 broadcast domains. If you had your own data center, you would control the network. You would deploy switches to create layer 2 networks, and define specific routing rules for traffic between those layer 2 networks. In other words: There you can create a network topology of your choice. But in the cloud, you have lost this ability. For the most part, everything has to be routed, and you need to live with whatever topology the IaaS provider offers you.

Why is it important to control your network?

But why is that a problem? Or to turn the question around: Why do you need or want the ability to assign your own addresses or create your own layer 2 networks? There are several good reasons.

Simplified configuration

If you control IP address assignment, it greatly simplifies configuration of your site. For example, you can just refer to your application or database servers by well-known, static yet private IP addresses, which may appear in various configuration files or scripts. In fact, with a reliable IP address assignment you can simply maintain a single, static /etc/hosts file for many of your servers. But as mentioned earlier, the dynamic addresses of Amazon EC2 instances will change with every reboot. So, what address do you write down in your configuration files? As mentioned, dynamic DNS is sometimes proposed as a solution here, or even on-the-fly re-writing of server configuration files, but who wants to deal with such an unnecessary complication?

Virtual network topologies for performance and security

If you control the network topology, you can create broadcast domains as a means to architect and segment your site, with controlled routing and security between your layer 2 networks. Being able to create broadcast domains therefore is a means to secure the tiers of your site, to keep broadcast traffic at manageable levels, to reflect your site’s architecture in the underlying network topology and of course to deploy cluster software that relies on the presence of a layer 2 network. Without this ability, you are restricted to the use of security groups (on Amazon) to secure different sections of the site, which are a good start but which of course are not a 100% replacement for stateful firewalls that could be deployed as routers between actual layer 2 networks. And naturally, you still don’t get all the other benefits of actual layer 2 networks.

Ability to move your network to different clouds

Different IaaS providers offer different means and capabilities for securing a network, expressing the architecture and topology, configuring load balancing and so on. Therefore, it is difficult to take your architecture and move it to a different data center, or even a different provider. What if you would like to spread your site across two data centers, one operated by Amazon, the other by Rackspace, for maximum availability? You would have to replicate the setup you developed for one provider and then adapt it to the changed network topology of the other. What if instead you could take the entire network topology and transfer and replicate it – exactly as is – to the other data center, including the IP addresses, broadcast domains, configured routing between tiers and all? This would offer you true mobility across clouds for your entire site and let you avoid a great deal of provider lock-in: Everything you need to define your entire network architecture would come in one handy package. Some efforts are under way to develop standards for this: As part of the OpenStack project there is an initiative, called ‘Network Containers’, which is concerned exactly with this, but those standards are still in their infancy at this point.

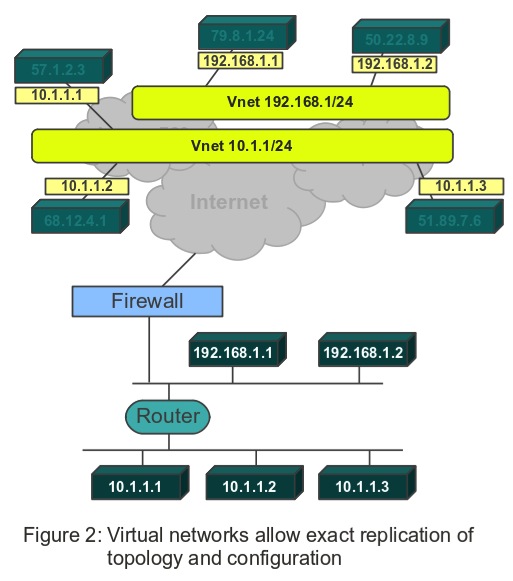

Virtual networks give you control over your network topology

So, users of IaaS offerings are forced to deal with a network infrastructure and topology over which they do not have control. This mandated topology may not match your site’s architecture very well. Furthermore, if we already have existing site configurations in more traditional hosting or network environments, we will be hard pressed to move them quickly and seamlessly into the cloud. This is exactly where virtual networks come into play. What is a virtual network? It is a network over which you have control, running on top of a network over which you do not have control.

With virtual networks you control the IP address assignment of your nodes, and you can create (virtual) layer 2 switches, even in otherwise fully routed cloud environments. Your switches may even span data centers or providers, or may stretch from your on-premise systems to cloud-based systems. Those virtual layer 2 switches can do everything a real switch can do, including broadcast or running non-IP protocols. You can also set up routers and firewalls between your broadcast domains (subnets) exactly the way you wish. There are of course different ways to create virtual networks. Here at vCider we believe we have a particularly user-friendly approach.

High Performance Computing Deployments in a Secure Virtual Private Cloud

Securing Scalable Cloud Services for Computational Chemistry and Pharma Research

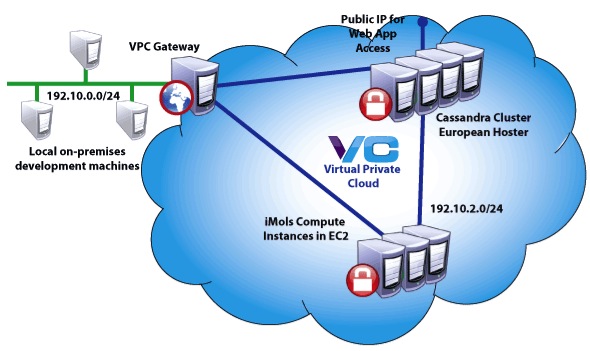

Mind the Byte is a Barcelona-based cloud application provider, offering computational services, applications, and data sets to biochemistry researchers in universities and research institutes, including researchers for the pharmaceutical industry. The company offers a cloud service called iMols, which compiles molecules, proteins and activities databases, becoming an in silico laboratory for storing chemogenomics data, building data sets, and sharing data and ideas with fellow researchers. The company also offers general consulting and computational services for researchers.

While simplifying computational chemistry for its customers, Mind the Byte faces its own challenges in the area of cloud security and management. The company stores vast quantities of biochemical data in Amazon S3. It accesses and processes this data via iMols running on EC2 instances in Amazon’s Virginia data center. iMols runs standard computations using the Chemistry Development Kit Java library (CDK), as well as Mind the Byte’s own proprietary calculations and analysis. Mind the Byte loads computations into Cassandra for fast, efficient interactive queries. For performance, cost, and security reasons, Cassandra instances and the iMols front end run in a data center in the Netherlands, close to Mind the Byte’s European customers. Mind the Byte manages operations from Barcelona.

The company needed a fast, efficient way to connect all these resources in a secure network that would be easy to manage.

The Solution: a vCider Virtual Private Cloud

Mind the Byte implemented a vCider Virtual Private Cloud to create a fast, secure private network for its cloud services and data. vCider’s Virtual Private Cloud (VPC) service enables cloud application developers to configure secure private networks that span data centers and cloud service providers.

Results: Fast, Secure Cloud Networking and More Time for Development

“Data security is vital for our customers, especially our Pharma customers. With vCider, we know that our cloud resources are safe.”

— Dr. Alfons Nonell-Canals, Mind the Byte

- A fast, efficient, and secure cloud network spanning cloud service providers on different continents

- Security for Pharma data and other biochemical research

- Flexibility to add and remove nodes through an easy-to-use management console

- Faster performance for Cassandra data stores

- Graphical reporting on network health through the vCider management console

- More time for chemistry research and customer management, now that cloud management has been simplified

SDN Factoid offered for your consideration…

Saw the other day that Infoblox was getting closer to their IPO and had set a price range for their offering. This got me to looking into their S-1 to see how they are doing.

Turns out that they are doing quite well. Revenue up to more than a $160M/yr run rate and growing nicely. If you’re not familiar with Infoblox, they have a DNS appliance as well as a number of other network configuration management products. In their S-1 they say:

We are a leader in automated network control and provide an appliance-based solution that enables dynamic networks and next-generation data centers. Our solution combines real-time IP address management with the automation of key network control and network change and configuration management processes in purpose-built physical and virtual appliances. It is based on our proprietary software that is highly scalable and automates vital network functions, such as IP address management, device configuration, compliance, network discovery, policy implementation, security and monitoring. Our solution enables our end customers to create dynamic networks, address burgeoning growth in the number of network-connected devices and applications, manage complex networks efficiently and capture more fully the value from virtualization and cloud computing.

Sounds a lot like Software Defined Networking? Right?

I think by any definition what Infoblox provides is a vital aspect of SDN. Pretty sure they’re aware of this too since they are a member of the Open Networking Foundation. This is a pretty big commitment since membership requires a $30,000 annual fee.

So, you’d think that SDN, Software Defined Networking, ONF and/or Open Networking Foundation would be prominent in the offering documents, right?

A quick search for these terms in their S-1 reveals the following tally:

- Software Defined Networking: zero

- SDN: zero

- Open Networking Foundation: zero

- ONF: zero
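A tally like this is easy to reproduce. The following is a minimal sketch, assuming the filing has been saved as plain text; the function name `count_terms` and the sample string are hypothetical stand-ins, not the actual S-1 or the method used for the search above.

```python
import re

TERMS = ["Software Defined Networking", "SDN", "Open Networking Foundation", "ONF"]


def count_terms(text, terms=TERMS):
    """Return {term: count} using case-insensitive whole-word matches."""
    counts = {}
    for term in terms:
        # Allow spaces or hyphens between words so "Software-Defined" also matches.
        pattern = r"\b" + r"[\s-]+".join(re.escape(w) for w in term.split()) + r"\b"
        counts[term] = len(re.findall(pattern, text, flags=re.IGNORECASE))
    return counts


# Hypothetical sample text, not the actual S-1.
sample = "We are a leader in automated network control."
for term, n in count_terms(sample).items():
    print(f"{term}: {n}")
```

Matching on word boundaries keeps the short acronyms from being counted inside longer words.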

SaaS Applications Running in a Secure Virtual Private Cloud

Securing Multi-Provider SaaS Services for a Reservation and Ticketing Company

“Better reservations” is the goal of Betterez, a SaaS provider that helps passenger transport operators to increase revenues and improve customer service. The Betterez solution includes a full-featured reservations and ticketing engine that permits direct sales via websites, Facebook, and a back-office Web application, as well as intelligence and analytics that help operators market more effectively and operate more efficiently.

The Betterez service runs in the cloud and relies on proven high performance platforms such as Node.js and MongoDB. To ensure that transport operators and passengers have continuous access to critical ticketing and operations systems, Betterez has implemented a fully redundant and scalable service. They have taken a multi-data-center approach that spans two cloud providers: Amazon AWS and Rackspace Cloud.

The company chose Rackspace as its cloud provider for MongoDB databases. They concluded that Rackspace’s disk performance was more reliable and better suited for their purposes. Rackspace also provides better support, which is important for Betterez’s mission-critical needs. All other services run in various Amazon AWS regions. Betterez needed secure communications between all its servers as well as a way to support dynamic scaling of services based on changing demand.

The Solution: vCider Virtual Private Cloud

Betterez decided to implement a vCider Virtual Private Cloud to create a fast, secure and scalable private network for its cloud infrastructure.

Results: Fast, Efficient Secure Networking and Reduced Operational Overhead

“vCider is the glue that keeps all servers and services communicating securely and in well-organized subnets, regardless of their data center location. Accomplishing this without vCider would be a difficult and tedious challenge. vCider saves us time and lets us focus on what we do best.”

—Mani Fazeli, co-founder

- A fast, efficient, and secure cloud network spanning data centers at both Amazon and Rackspace

- Flexibility to add and remove nodes through an easy-to-use management console

- Encrypted communication between MongoDB instances in the multi-data-center replica set

- Graphical reporting on network health through the vCider management console

- Reduced operational overhead and demand on system engineers

Open Networking Foundation and the Promise of Inter-Controllable SDNs

Big news in the networking world this week with the announcement of the Open Networking Foundation (ONF).

The Open Networking Foundation is a nonprofit organization dedicated to promoting a new approach to networking called Software-Defined Networking (SDN). SDN allows owners and operators of networks to control and manage their networks to best serve their needs. ONF’s first priority is to develop and use the OpenFlow protocol. Through simplified hardware and network management, OpenFlow seeks to increase network functionality while lowering the cost associated with operating networks.

This is an important and ambitious goal. The news got widespread coverage in the trade press (here, here, here and here) as well as a nice write up in the NYT.

There isn’t a lot of detail on how this is all going to work, other than that OpenFlow will be the foundation of the approach. Recall that OpenFlow is an approach that separates the control plane from the data plane in network equipment, enabling controllers to manipulate the forwarding functions of the device. Once separate, the hope is that smarter, cheaper networks will be possible since fast, inexpensive forwarding engines can then be controlled by external software.
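To make the control plane / data plane split concrete, here is a toy model in Python. It is only a sketch of the idea: the `Controller` and `Switch` classes and their methods are hypothetical, and nothing here reflects the actual OpenFlow wire protocol or any real controller API.

```python
class Controller:
    """External software that decides how traffic is forwarded (control plane)."""

    def __init__(self, routes):
        self.routes = routes  # destination address -> output port

    def decide(self, dst):
        # Return an output port, or None to drop traffic for unknown destinations.
        return self.routes.get(dst)


class Switch:
    """Forwarding engine (data plane): it only matches packets against its table."""

    def __init__(self, controller):
        self.controller = controller
        self.flow_table = {}  # destination -> output port, filled in by the controller
        self.misses = 0

    def forward(self, dst):
        if dst not in self.flow_table:
            # Table miss: ask the controller once and cache its decision.
            self.misses += 1
            self.flow_table[dst] = self.controller.decide(dst)
        return self.flow_table[dst]


controller = Controller({"10.0.0.2": 1, "10.0.0.3": 2})
switch = Switch(controller)
for dst in ["10.0.0.2", "10.0.0.2", "10.0.0.9"]:
    print(dst, "->", switch.forward(dst))
print("controller consulted", switch.misses, "times")
```

The switch holds no forwarding logic of its own. It looks up its table and defers to the controller on a miss, which is why the forwarding hardware can stay simple while the decisions live in external software.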

It’s the external control of networks that I find so exciting. OpenFlow is just one technique for building Software Defined Networks (SDNs), which have the potential to revolutionize the way networks are built and managed. Clearly, virtual networks (VNs) are SDNs.

The vision of ONF is not only that the networks be interoperable, but that they also be inter-controllable. I remember Interop back in the late ’90s, when the plugfests were the highlights of the conference. We take interoperability for granted now, but there was a time when it wasn’t uncommon for one vendor’s router to be unable to route another vendor’s packets.

I guess we can start looking forward to control-fests.

The participation of all the major vendors clearly indicates the importance of SDNs. Cisco, Brocade, Broadcom, Ciena, Juniper and Marvell are all on board. I like that large network operators including Google, Facebook, Yahoo, Microsoft, Verizon and Deutsche Telekom make up the board, and not the vendors. This tells me that direction will be set by what users want.

Although I have to admit that I remain doubtful that we’ll be running inter-controllable OpenFlow-enabled devices any time soon. Anyone who remembers the Unix Wars knows that big companies can’t agree on very much when it comes to their competitive advantage. Heck, they couldn’t even agree on byte order back then.

The unfortunate reality is that each of these vendors can support OpenFlow 100% while at the same time pursuing their own independent SDN strategy, which could result in networks that are no more inter-controllable than they are today.

