[fancy_header3 variation=”orange”] Setting Up Overlays on Open vSwitch [/fancy_header3]

Most “SDN” solutions involve overlays, or at the least hardware overlay gateways or ToR switches of some type. Some vendors sell overlays that terminate in hardware, others sell overlays that terminate in the server. The encapsulations include standards like GRE and VXLAN and, soon, Geneve (Generic Network Virtualization Encapsulation: basically the good parts of the other encaps, evolved). None of these overlay networks should ever be set up by hand the way we provision networks today, but if you are trying to get a good understanding of OVS and overlays to prove something out before you code it, this may be helpful. Before we do any coding to automate provisioning, it helps to work out any potential blockers ahead of time. OVS is the reference SDN datapath, from developers who I regard as the best in the business. If you invest time into evolving your network, OVS is the place to start.

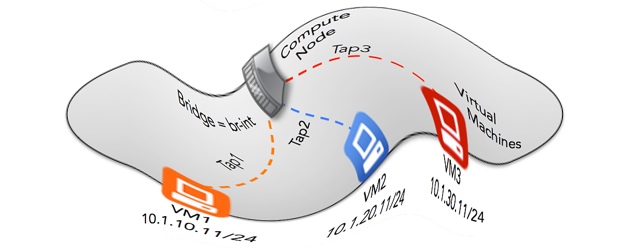

Rather than type out a bunch of details, I did a detailed drawing of how things can look, with example addresses. This isn’t necessarily a good beginner how-to for OpenFlow, Open vSwitch, etc., but if you are looking to understand how to decouple from the physical constraints of current-generation networks, OVS and overlays are the only rational architecture that is actually implementable in the brownfield data center today. These are things I think you need to understand cold if you are developing or operating (or both) overlay solutions. For architecture, understanding the picture rather than the implementation details is probably enough, particularly if you are dealing with OpenStack. A decent OpenStack integration will abstract the details, but understanding them is going to be important to those working on cloud infrastructures.

[fancy_header3 variation=”orange”] Setting Up Overlays on Open vSwitch Tutorial [/fancy_header3]

This is for three hypervisors running as nested hypervisors. Basically, take a box, install KVM and boot three hosts on it. Then grab a small Linux distribution like CirrOS for the guests. Libvirt is handy for this but too low level to mix into this overview. Search for it; there is plenty of documentation out there.

The topology will look something like the following:

[image_frame style=”framed_shadow” align=”center” alt=”SDN Overlays”]http://networkstatic.net/wp-content/uploads/2014/03/Overlays-Blog-650×494.jpeg[/image_frame]

I have different subnets to emphasize the flexibility overlays present. You don’t need to add physical eth0 interfaces into a bridge for this. Your TEP (tunnel endpoint) only needs to use any address on the Linux host that can reach the destination tunnel endpoint. Adding Ethernet NICs to the bridge is only necessary if you are setting up a gateway host to redistribute overlay traffic into another, non-overlay network.
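If you want to see which local address a host would use to reach a remote tunnel endpoint, you can ask the kernel routing table. The following is only a small sketch, assuming the iproute2 `ip` tool is installed; the helper name `tep_src` is made up for this example.

```bash
# Extract the "src" address from `ip route get` output read on stdin.
# tep_src is a hypothetical helper name, not part of OVS or iproute2.
tep_src() {
    awk '{ for (i = 1; i < NF; i++) if ($i == "src") { print $(i + 1); exit } }'
}

# Usage on a real host (prints the address the kernel would source from):
#   ip route get 192.168.1.181 | tep_src
```

Whatever address comes back is a reasonable candidate for `options:local_ip`, since it is by definition an address on the host that can reach the remote endpoint.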

If you are new to flow forwarding and OpenFlow, I highly recommend doing only the configuration portion, unless you learn through troubleshooting and are willing to work through issues, which is awesome and a great way to learn. Google is your friend; I know it is mine. If you do configuration (OVSDB) only, change key=flow to key=10 or some other number. key=flow means the OpenFlow v1.3 flowmod will set the tun_id using the OXM extension. set_tunnel_id is as advanced as OpenFlow gets on the tunnel side, which is a downside and is why we are currently implementing NXM extensions in ODL. This is another good example of why many of us feel there needs to be a separate software OF spec for those of us who want to move faster than the silicon foundries. This is a totally proactive setup, meaning it works with or without a controller. You can use (or script) client commands to implement the scenario, which is actually what the OpenStack OVS plugin does. Alternatively, you can use open source controllers such as OpenDaylight and Ryu, or a commercial controller such as NSX from VMware.

The example will set up a full mesh of tunnels and attach a VM to br0, after which all VMs on that segment should be able to ping each other. I recommend Open vSwitch 2.0 or higher to ensure feature availability with VXLAN etc. Also, if you are new to Open vSwitch or Linux networking, I recommend just installing it and tinkering with adding bridges and ports and seeing how hypervisors integrate with OVS first, as tunnels will be a bit confusing to start with.
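Since a full mesh means one tunnel port per remote TEP on every host, the commands are easy to generate. Here is a rough sketch that only prints the `ovs-vsctl` commands for one host so you can review them first. The function name `mesh_cmds`, the `vxlan0`, `vxlan1`, ... port names and the choice to start OpenFlow port numbers at 10 are assumptions for this example, not anything OVS requires.

```bash
# Print the ovs-vsctl commands for one host's side of a full VXLAN mesh.
# Usage: mesh_cmds <bridge> <local_ip> <remote_ip>...
mesh_cmds() {
    local bridge=$1 local_ip=$2
    shift 2
    local n=0 remote
    for remote in "$@"; do
        # Port names (vxlan0, vxlan1, ...) and OpenFlow port numbers
        # (10, 11, ...) are arbitrary choices for this sketch.
        echo "ovs-vsctl add-port $bridge vxlan$n -- set Interface vxlan$n" \
             "type=vxlan options:key=flow options:local_ip=$local_ip" \
             "options:remote_ip=$remote ofport_request=$((10 + n))"
        n=$((n + 1))
    done
}

# One host of the three-TEP example: two tunnels, one per remote TEP.
mesh_cmds br0 192.168.1.180 192.168.1.181 192.168.1.182
```

Run it once per host with that host's address first, check the output, then pipe it to `sudo sh` if it looks right.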

[fancy_link link=” Install Open vSwitch v2 from Source on Red Hat Fedora 19″ variation=”red” textColor=”#fcfcfc” target=”blank”]http://networkstatic.net/install-open-vswitch-networking-red-hat-fedora-20/[/fancy_link]

```bash
# Tunnel configuration only. For a configuration-only setup (no flowmods),
# replace key=flow with a fixed key such as key=10 on every tunnel.

# Host1 (192.168.1.180)
ovs-vsctl add-port br0 vxlan -- set Interface vxlan type=vxlan options:key=flow options:local_ip=192.168.1.180 options:remote_ip=192.168.1.181 ofport_request=10
ovs-vsctl add-port br0 vxlan1 -- set Interface vxlan1 type=vxlan options:key=flow options:local_ip=192.168.1.180 options:remote_ip=192.168.1.182 ofport_request=11

# Host2 (192.168.1.181)
ovs-vsctl add-port br0 vxlan -- set Interface vxlan type=vxlan options:key=flow options:local_ip=192.168.1.181 options:remote_ip=192.168.1.182 ofport_request=10
ovs-vsctl add-port br0 vxlan1 -- set Interface vxlan1 type=vxlan options:key=flow options:local_ip=192.168.1.181 options:remote_ip=192.168.1.180 ofport_request=11

# Host3 (192.168.1.182)
ovs-vsctl add-port br0 vxlan -- set Interface vxlan type=vxlan options:key=flow options:local_ip=192.168.1.182 options:remote_ip=192.168.1.181 ofport_request=10
ovs-vsctl add-port br0 vxlan1 -- set Interface vxlan1 type=vxlan options:key=flow options:local_ip=192.168.1.182 options:remote_ip=192.168.1.180 ofport_request=11
```

To test with a ping, the easiest way is to get a VM spun up. You can also use namespaces and the like to get an L3 interface for testing. To find MAC addresses, install a normal rule and look at the fdb table once you have VMs running and attached to an OVS bridge with a tap interface:

```bash
sudo ovs-ofctl -O OpenFlow13 add-flow br0 "priority=0,actions=normal"
sudo ovs-appctl fdb/show br0
```

For the example, the MAC addresses are as follows:

```
TEP1-192.168.1.180
------------------
 port  VLAN  MAC                Age
    2     0  00:00:00:00:00:01   11

TEP2-192.168.1.181
------------------
 port  VLAN  MAC                Age
    2     0  00:00:00:00:00:05    3
    1     0  00:00:00:00:00:04    3

TEP3-192.168.1.182
------------------
 port  VLAN  MAC                Age
    1     0  00:00:00:00:00:08   24
```
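If you would rather not boot a VM, the namespace approach can be sketched roughly like this: create an OVS internal port, move it into a network namespace, and give it a MAC and an IP. This only prints the commands. The names `ns_cmds`, `ns1` and `tap1` and the 10.0.0.1/24 address are placeholders I picked for illustration; use whatever addressing your own drawing calls for.

```bash
# Print the commands that attach a network namespace to an OVS bridge.
# Usage: ns_cmds <bridge> <namespace> <port> <mac> <cidr> <ofport>
# All names and addresses passed in below are placeholders for this sketch.
ns_cmds() {
    local bridge=$1 ns=$2 port=$3 mac=$4 cidr=$5 ofport=$6
    cat <<EOF
ovs-vsctl add-port $bridge $port -- set Interface $port type=internal ofport_request=$ofport
ip netns add $ns
ip link set $port netns $ns
ip netns exec $ns ip link set dev $port address $mac
ip netns exec $ns ip addr add $cidr dev $port
ip netns exec $ns ip link set dev $port up
EOF
}

# Stand-in for the VM behind TEP1: MAC 00:00:00:00:00:01 on OpenFlow port 2.
ns_cmds br0 ns1 tap1 00:00:00:00:00:01 10.0.0.1/24 2
```

Review the output and pipe it to `sudo sh` to apply it. Requesting the OpenFlow port number matters once you install flows that match on `in_port`.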

```bash
# Host 192.168.1.180
ovs-vsctl add-br br0
ovs-vsctl set bridge br0 protocols=OpenFlow13
ovs-vsctl add-port br0 vxlan -- set Interface vxlan type=vxlan options:key=flow options:local_ip=192.168.1.180 options:remote_ip=192.168.1.181 ofport_request=10
ovs-vsctl add-port br0 vxlan1 -- set Interface vxlan1 type=vxlan options:key=flow options:local_ip=192.168.1.180 options:remote_ip=192.168.1.182 ofport_request=11

# Add OpenFlow flowmods using 3 tables: Classifier, Ingress, Egress.
# That is an implementation choice, not a requirement.

# Table 0: tunnel traffic goes to table 2; local VM traffic is tagged with tun_id 5 and goes to table 1.
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,tun_id=0x5,in_port=10 actions=goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,tun_id=0x5,in_port=11 actions=goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,in_port=2,dl_src=00:00:00:00:00:01 actions=set_field:5->tun_id,goto_table:1"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,priority=16384,in_port=1 actions=drop"

# Table 1: send known remote MACs to the right tunnel port, flood broadcast to all tunnels.
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:08 actions=output:11,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:04 actions=output:10,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:05 actions=output:10,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,priority=16384,tun_id=0x5,dl_dst=ff:ff:ff:ff:ff:ff actions=output:10,output:11,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,priority=8192,tun_id=0x5 actions=goto_table:2"

# Table 2: deliver to the local VM port, drop everything else in the segment.
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,tun_id=0x5,dl_dst=00:00:00:00:00:01 actions=output:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,priority=16384,tun_id=0x5,dl_dst=ff:ff:ff:ff:ff:ff actions=output:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,priority=8192,tun_id=0x5 actions=drop"
```

```bash
# Host 192.168.1.181
ovs-vsctl add-br br0
ovs-vsctl set bridge br0 protocols=OpenFlow13
ovs-vsctl add-port br0 vxlan -- set Interface vxlan type=vxlan options:key=flow options:local_ip=192.168.1.181 options:remote_ip=192.168.1.182 ofport_request=10
ovs-vsctl add-port br0 vxlan1 -- set Interface vxlan1 type=vxlan options:key=flow options:local_ip=192.168.1.181 options:remote_ip=192.168.1.180 ofport_request=11

# Add OpenFlow flowmods using 3 tables: Classifier, Ingress, Egress.
# That is an implementation choice, not a requirement.

# Table 0: tunnel traffic goes to table 2; local VM traffic is tagged with tun_id 5 and goes to table 1.
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,tun_id=0x5,in_port=10 actions=goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,tun_id=0x5,in_port=11 actions=goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,in_port=1,dl_src=00:00:00:00:00:04 actions=set_field:5->tun_id,goto_table:1"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,in_port=2,dl_src=00:00:00:00:00:05 actions=set_field:5->tun_id,goto_table:1"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,priority=16384,in_port=1 actions=drop"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,priority=16384,in_port=2 actions=drop"

# Table 1: send known remote MACs to the right tunnel port, flood broadcast to all tunnels.
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:08 actions=output:10,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:02 actions=output:10,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:01 actions=output:11,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,priority=16384,tun_id=0x5,dl_dst=ff:ff:ff:ff:ff:ff actions=output:10,output:11,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,priority=8192,tun_id=0x5 actions=goto_table:2"

# Table 2: deliver to the local VM ports, drop everything else in the segment.
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,tun_id=0x5,dl_dst=00:00:00:00:00:04 actions=output:1"  # VM1
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,tun_id=0x5,dl_dst=00:00:00:00:00:05 actions=output:2"  # VM2
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,priority=16384,tun_id=0x5,dl_dst=ff:ff:ff:ff:ff:ff actions=output:1,output:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,priority=8192,tun_id=0x5 actions=drop"
```

```bash
# Host 192.168.1.182
ovs-vsctl add-br br0
ovs-vsctl set bridge br0 protocols=OpenFlow13
ovs-vsctl add-port br0 vxlan -- set Interface vxlan type=vxlan options:key=flow options:local_ip=192.168.1.182 options:remote_ip=192.168.1.181 ofport_request=10
ovs-vsctl add-port br0 vxlan1 -- set Interface vxlan1 type=vxlan options:key=flow options:local_ip=192.168.1.182 options:remote_ip=192.168.1.180 ofport_request=11

# Add OpenFlow flowmods using 3 tables: Classifier, Ingress, Egress.
# That is an implementation choice, not a requirement.

# Table 0: tunnel traffic goes to table 2; local VM traffic is tagged with tun_id 5 and goes to table 1.
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,tun_id=0x5,in_port=10 actions=goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,tun_id=0x5,in_port=11 actions=goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,in_port=1,dl_src=00:00:00:00:00:08 actions=set_field:5->tun_id,goto_table:1"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=0,priority=16384,in_port=1 actions=drop"

# Table 1: send known remote MACs to the right tunnel port, flood broadcast to all tunnels.
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:01 actions=output:11,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:02 actions=output:10,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:04 actions=output:10,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,tun_id=0x5,dl_dst=00:00:00:00:00:05 actions=output:10,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,priority=16384,tun_id=0x5,dl_dst=ff:ff:ff:ff:ff:ff actions=output:10,output:11,goto_table:2"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=1,priority=8192,tun_id=0x5 actions=goto_table:2"

# Table 2: deliver to the local VM port, drop everything else in the segment.
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,tun_id=0x5,dl_dst=00:00:00:00:00:08 actions=output:1"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,priority=16384,tun_id=0x5,dl_dst=ff:ff:ff:ff:ff:ff actions=output:1"
ovs-ofctl add-flow -O OpenFlow13 br0 "table=2,priority=8192,tun_id=0x5 actions=drop"
```
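The per-host flow sets above follow one repeating pattern: each local VM gets a table 0 entry that tags its traffic and a table 2 entry that delivers to it, and each remote MAC gets a table 1 entry pointing at a tunnel port. A rough sketch of scripting that pattern is below. The helper names `local_vm_flows` and `remote_mac_flow` are hypothetical, and the functions only print the `ovs-ofctl` commands rather than run them.

```bash
# Common prefix for every generated command.
OFCTL="ovs-ofctl add-flow -O OpenFlow13"

# Table 0 and table 2 entries for a VM attached to this host.
# Usage: local_vm_flows <bridge> <tun_id> <ofport> <mac>
local_vm_flows() {
    echo "$OFCTL $1 \"table=0,in_port=$3,dl_src=$4 actions=set_field:$2->tun_id,goto_table:1\""
    echo "$OFCTL $1 \"table=2,tun_id=$2,dl_dst=$4 actions=output:$3\""
}

# Table 1 entry that steers a remote MAC to the tunnel port that reaches it.
# Usage: remote_mac_flow <bridge> <tun_id> <tunnel_ofport> <mac>
remote_mac_flow() {
    echo "$OFCTL $1 \"table=1,tun_id=$2,dl_dst=$4 actions=output:$3,goto_table:2\""
}

# Host 192.168.1.180: one local VM on port 2, one remote MAC behind tunnel port 11.
local_vm_flows  br0 0x5 2  00:00:00:00:00:01
remote_mac_flow br0 0x5 11 00:00:00:00:00:08
```

The broadcast and default drop entries are left out of the sketch since they depend on the full list of ports on each host.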

Verify that your flows are installed and that the counters are incrementing if you have a guest VM attached to a port. Here is an example from an OpenStack deployment I had running locally in a VM while writing this:

```
$ sudo ovs-ofctl -O OpenFlow13 dump-flows br-int
OFPST_FLOW reply (OF1.3) (xid=0x2):
 cookie=0x0, duration=51452.798s, table=0, n_packets=54499, n_bytes=5752542, tun_id=0x5,in_port=10 actions=goto_table:2
 cookie=0x0, duration=51452.787s, table=0, n_packets=52544, n_bytes=5060832, in_port=1,dl_src=00:00:00:00:00:08 actions=set_field:0x5->tun_id,goto_table:1
 cookie=0x0, duration=51452.781s, table=0, n_packets=0, n_bytes=0, priority=16384,in_port=1 actions=drop
 cookie=0x0, duration=51452.757s, table=1, n_packets=0, n_bytes=0, priority=8192,tun_id=0x5 actions=goto_table:2
 cookie=0x0, duration=51452.773s, table=1, n_packets=52469, n_bytes=5057682, tun_id=0x5,dl_dst=00:00:00:00:00:01 actions=output:10,goto_table:2
 cookie=0x0, duration=51452.764s, table=1, n_packets=75, n_bytes=3150, priority=16384,tun_id=0x5,dl_dst=ff:ff:ff:ff:ff:ff actions=output:10,goto_table:2
 cookie=0x0, duration=51452.735s, table=2, n_packets=52469, n_bytes=5057682, priority=8192,tun_id=0x5 actions=drop
 cookie=0x0, duration=51452.75s, table=2, n_packets=52466, n_bytes=5057556, tun_id=0x5,dl_dst=00:00:00:00:00:08 actions=output:1
 cookie=0x0, duration=51452.742s, table=2, n_packets=2108, n_bytes=698136, priority=16384,tun_id=0x5,dl_dst=ff:ff:ff:ff:ff:ff actions=output:1
```
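Eyeballing `n_packets` gets tedious once there are more than a handful of flows. One way to summarize the counters, sketched here with awk, is to total the packets per table and count the flows that have never been hit. The function name `flow_counters` is just something I made up for the example; it reads `dump-flows` output on stdin.

```bash
# Summarize `ovs-ofctl dump-flows` output read on stdin:
# total packets per table and the number of flows with n_packets=0.
flow_counters() {
    awk '
        /n_packets=/ {
            table = ""; pkts = 0
            for (i = 1; i <= NF; i++) {
                if ($i ~ /^table=/) {
                    table = $i; sub(/^table=/, "", table); sub(/,$/, "", table)
                }
                if ($i ~ /^n_packets=/) {
                    pkts = $i; sub(/^n_packets=/, "", pkts); sub(/,$/, "", pkts)
                }
            }
            total[table] += pkts
            if (pkts + 0 == 0) idle[table]++
        }
        END {
            for (t in total)
                printf "table %s: %d packets, %d flows with no hits\n", t, total[t], idle[t]
        }
    ' | sort
}

# Usage on a real host:
#   sudo ovs-ofctl -O OpenFlow13 dump-flows br0 | flow_counters
```

Running it twice a few seconds apart while a ping is going is a quick way to see which tables the traffic is actually traversing.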

Here are some helpful aliases/commands that I use for client commands.

```bash
### OVS Aliases ###
alias novh='nova hypervisor-list'
alias novm='nova-manage service list'
alias ovstart='sudo /usr/share/openvswitch/scripts/ovs-ctl start'
alias ovs='sudo ovs-vsctl show'
alias ovsd='sudo ovsdb-client dump'
alias ovsp='sudo ovs-dpctl show'
alias ovsf='sudo ovs-ofctl '
alias logs="sudo journalctl -n 300 --no-pager"
alias ologs="tail -n 300 /var/log/openvswitch/ovs-vswitchd.log"
alias vsh="sudo virsh list"
alias ovap="sudo ovs-appctl fdb/show "
alias ovapd="sudo ovs-appctl bridge/dump-flows "
alias dpfl="sudo ovs-dpctl dump-flows "
alias ovtun="sudo ovs-ofctl dump-flows br-tun"
alias ovint="sudo ovs-ofctl dump-flows br-int"
alias ovl="sudo ovs-ofctl dump-flows br-int"
alias dfl="sudo ovs-ofctl -O OpenFlow13 del-flows "
alias ovls="sudo ovs-ofctl -O OpenFlow13 dump-flows br-int"
alias ofport="sudo ovs-ofctl -O OpenFlow13 dump-ports br-int"
alias del="sudo ovs-ofctl -O OpenFlow13 del-flows "
alias lsof6='lsof -P -iTCP -sTCP:LISTEN | grep 66'
alias ns="sudo ip netns exec "
```

To set up overlays using OpenStack, OpenDaylight, Open vSwitch, OpenFlow and OVSDB (lol, that's a lot of Opens), see the following:

[fancy_link link=”OpenDaylight OpenStack Integration with DevStack on Fedora” variation=”red” target=”blank”]http://networkstatic.net/opendaylight-openstack-integration-devstack-fedora-20/[/fancy_link]

For more on OVS and flow forwarding using OpenFlow and/or the OpenFlow/Nicira extensions, take a look at Ben Pfaff’s latest draft of OpenFlow/OVS fields in Open vSwitch. This is, in my opinion, what OpenFlow should look like in order to facilitate overlays with open standards in the data center. Don’t confuse overlays with hypervisor networking only. By far the majority of SDN data center implementations use overlays, regardless of whether the TEP terminates on the soft vSwitch or the physical ToR switch. Cisco ACI, for example, sets up TEPs in its leaf hardware nodes using some sort of signaling that I know nothing about beyond overlays and a few bits in the VXLAN header that are keyed on for application classification.

I am a developer on the OpenDaylight project, and we managed to get basic overlay functionality implemented, but we have certainly hit the wall on adding functionality without using NXM extensions. Long story short, if the OpenFlow specification is to stay relevant (which Dan Pitt and company work hard to ensure), it needs to address these functions in the spec rather than in OF-Config only. IPv4 tunnel source/destination, for example, is a very obvious one. The current ONF answer would be to use OF-Config; in OpenDaylight we solved it using the awesomeness of the OVSDB protocol (RFC 7047) from Pfaff and Davie.

For those in networking digging under the covers of SDN, or developers implementing SDN solutions, I think it's important to weigh in on this topic. Some incumbents would be fine with SDN ending up so fragmented that it resembles SNMP MIBs, with little overall impact or disruption. I, for one, will use whatever protocol is required as long as it is open and, more importantly, supported by the data plane. It would be nice if it were an agreed-upon “standard”, but that doesn't mean I need a committee of people who are not engaged with the community. A community de facto can also be a standard, and often a much more meaningful one at that. Take a look at the document; Ben has added a ton of details that could only have come from the experience of being at the forefront of SDN through his leadership and development in the Open vSwitch project.

[fancy_link link=”http://benpfaff.org/~blp/ovs-fields.7.pdf” variation=”orange” target=”blank”]OpenFlow and Open vSwitch Fields 2nd Draft via benpfaff.org[/fancy_link]

Cya!

Brent,

Thanks for the post regarding this setup. I read it and it’s Greek to me, where do I go to learn more about the basics of this? Thanks!

Vic – openvswitch.org should help. You can also subscribe/read the mailing list there. For the flow entries Brent is showing http://openvswitch.org/cgi-bin/ovsman.cgi?page=utilities/ovs-ofctl.8 For commands related to adding/configuring OVS bridges http://openvswitch.org/cgi-bin/ovsman.cgi?page=utilities/ovs-vsctl.8. There is also a tutorial under documentation at openvswitch.org

Brent – great post. The quote below was an ‘aha’ moment for me on some confusion I had about where TEPs need to be.

“You don’t need to add physical eth0 interfaces into a bridge for this. your TEP only needs to use any address on the Linux host that can reach the destination tunnel endpoint.”

Hi David, That is a great point! The tunnels are stateless, which is also handy, very much like something else we know: good old MPLS 🙂 When I originally started working with OVS it was prior to OpenStack, so I was more in the traditional mindset, which involved tying it into the “normal” network, VLANs and so on. In that case adding a NIC interface like eth0 (ovs-vsctl add-port br0 eth0) made sense. If you look at br-ex in an OpenStack environment, that is all it is doing: br-int with flowmods facing the overlay, and br-ex with a normal OF rule to flood and learn.

There are also hidden flows with a high priority for in-band OF controller / OVSDB manager connectivity. Ben Pfaff talks about this in the FAQ which he puts a bunch of time in documenting for the community. Great read if you get a second, http://goo.gl/EcwKAx

Here is the output w/ hidden flows from an OpenStack controller node:

$ sudo ovs-appctl bridge/dump-flows br-int

duration=71391s, priority=180008, n_packets=0, n_bytes=0, priority=180008,tcp,nw_src=172.16.86.1,tp_src=6640,actions=NORMAL

duration=71391s, priority=180008, n_packets=0, n_bytes=0, priority=180008,tcp,nw_src=172.16.86.1,tp_src=6633,actions=NORMAL

duration=71391s, priority=180000, n_packets=0, n_bytes=0, priority=180000,udp,in_port=LOCAL,dl_src=72:d8:e9:6e:5c:43,tp_src=68,tp_dst=67,actions=NORMAL

duration=71391s, priority=32768, n_packets=0, n_bytes=0, in_port=1,dl_src=fa:16:3e:97:75:49,actions=set_field:0x1->tun_id,goto_table:10

duration=71391s, priority=32768, n_packets=0, n_bytes=0, in_port=1,dl_src=fa:16:3e:ea:82:de,actions=set_field:0x2->tun_id,goto_table:10

duration=71391s, priority=180006, n_packets=0, n_bytes=0, priority=180006,arp,arp_spa=172.16.86.1,arp_op=1,actions=NORMAL

duration=71391s, priority=8192, n_packets=0, n_bytes=0, priority=8192,in_port=1,actions=drop

duration=2654s, priority=32768, n_packets=0, n_bytes=0, dl_type=0x88cc,actions=CONTROLLER:56

duration=71391s, priority=180002, n_packets=0, n_bytes=0, priority=180002,arp,dl_src=72:d8:e9:6e:5c:43,arp_op=1,actions=NORMAL

duration=71391s, priority=180004, n_packets=0, n_bytes=0, priority=180004,arp,dl_src=00:50:56:f0:56:cd,arp_op=1,actions=NORMAL

duration=71391s, priority=180001, n_packets=0, n_bytes=0, priority=180001,arp,dl_dst=72:d8:e9:6e:5c:43,arp_op=2,actions=NORMAL

duration=71391s, priority=180003, n_packets=0, n_bytes=0, priority=180003,arp,dl_dst=00:50:56:f0:56:cd,arp_op=2,actions=NORMAL

duration=71391s, priority=180005, n_packets=0, n_bytes=0, priority=180005,arp,arp_tpa=172.16.86.1,arp_op=2,actions=NORMAL

duration=71391s, priority=180007, n_packets=0, n_bytes=0, priority=180007,tcp,nw_dst=172.16.86.1,tp_dst=6640,actions=NORMAL

duration=71391s, priority=180007, n_packets=0, n_bytes=0, priority=180007,tcp,nw_dst=172.16.86.1,tp_dst=6633,actions=NORMAL

table_id=10, duration=71391s, priority=8192, n_packets=0, n_bytes=0, priority=8192,tun_id=0x1,actions=goto_table:20

table_id=10, duration=71391s, priority=8192, n_packets=0, n_bytes=0, priority=8192,tun_id=0x2,actions=goto_table:20

table_id=20, duration=71391s, priority=8192, n_packets=0, n_bytes=0, priority=8192,tun_id=0x1,actions=drop

table_id=20, duration=71391s, priority=8192, n_packets=0, n_bytes=0, priority=8192,tun_id=0x2,actions=drop

table_id=20, duration=71391s, priority=16384, n_packets=0, n_bytes=0, priority=16384,tun_id=0x1,dl_dst=01:00:00:00:00:00/01:00:00:00:00:00,actions=output:1

table_id=20, duration=71391s, priority=16384, n_packets=0, n_bytes=0, priority=16384,tun_id=0x2,dl_dst=01:00:00:00:00:00/01:00:00:00:00:00,actions=output:1

table_id=20, duration=71391s, priority=32768, n_packets=0, n_bytes=0, tun_id=0x2,dl_dst=fa:16:3e:ea:82:de,actions=output:1

table_id=20, duration=71391s, priority=32768, n_packets=0, n_bytes=0, tun_id=0x1,dl_dst=fa:16:3e:97:75:49,actions=output:1

table_id=254, duration=71457s, priority=0, n_packets=0, n_bytes=0, priority=0,reg0=0x3,actions=drop

table_id=254, duration=71457s, priority=0, n_packets=17, n_bytes=2082, priority=0,reg0=0x1,actions=controller(reason=no_match)

table_id=254, duration=71457s, priority=0, n_packets=0, n_bytes=0, priority=0,reg0=0x2,actions=drop
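In the output above the hidden in-band flows are the ones with priorities from 180000 to 180008. If you only want to see those, a small awk filter does the job. This is a sketch that treats any priority of 180000 or higher as a hidden flow, which is an assumption based on this output rather than something I am quoting from the OVS documentation; `hidden_flows` is a made-up helper name.

```bash
# Keep only the lines of `ovs-appctl bridge/dump-flows` output (on stdin)
# whose first priority= value is 180000 or higher.
# Assumption: that priority range identifies the hidden in-band flows.
hidden_flows() {
    awk 'match($0, /priority=[0-9]+/) {
        # "priority=" is 9 characters, so the number starts at RSTART + 9.
        prio = substr($0, RSTART + 9, RLENGTH - 9) + 0
        if (prio >= 180000) print
    }'
}

# Usage on a real host:
#   sudo ovs-appctl bridge/dump-flows br-int | hidden_flows
```

Flip the comparison to `prio < 180000` to see only the flows you or your controller installed.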

Thanks for the comments and assistance. That is a great breakthrough! I had the same “aha” moment when I finally understood how the overlay worked.

Respect,

-Brent

Hi Vic, I would start out by just getting a solid understanding of hypervisors and switching traffic for VMs. Starting out with VirtualBox ( https://www.virtualbox.org/wiki/Downloads ) and installing Fedora and Open vSwitch will teach you a ton about Linux and OVS, which is the perfect place to start in my opinion. Your networking background will shine when working with these Linux tools compared to our systems admin/engineer brethren.

Cheers and thanks for the feedback!

-Brent

Oh yeah. Well I’ll setup ClosedStack, ClosedNightlight, ClosedpSwitch, ClosedFlow, and CVSDB, and kick your butt with a lot of closed stuff. Kidding of course. Great stuff Brent.

Haha, thanks Dave!! You the man! Added a bit to the end of the post, curious on your thoughts bro.

Hello Brent, Thank you for your interesting post

I cannot see anything at the reference link in the last sentence. Please fix it.

Best regards

Hi Giang, I fixed the link, appreciate it.