[fancy_header3 variation=”slategrey”]IETF Draft – BGP-signaled end-system IP/VPNs Contrail[/fancy_header3]

There is a new IETF draft, BGP-signaled end-system IP/VPNs (draft-marques-l3vpn-end-system), submitted for review. The author list is quite the who’s who of a working group, with traditional networking vanguard companies like Cisco, Juniper, Infinera, AT&T Labs and Verizon, plus a newcomer to the race, Contrail. Contrail is an SDN startup with $10 million in Series A funding. Some of the best out there are heading that way, such as Kireeti Kompella from Juniper, Ankur Singla from Aruba (I originally typed Arista; edited Oct 10, thanks Ankur!), Pedro Marques from Google and Harshad Nakil from Cisco. Rumor is this is a spin-in for Juniper. If you want to go straight to the draft and skip any of this post here is the link.

What jumps out most on the surface is that this draft actually speaks to a coherent control plane. I have changed my mind about what is going on in the draft proposal about 10 times over the 3 days I have looked at it, so it will probably change again, and I am sure I am missing about a dozen nuances. It is truly amazing to see how BGP has evolved through extensions to be as relevant today, and in the future, as ever.

The problem is at the edge, whether it is orchestration, policy application or scale, and this looks to address that issue in software on the next-gen edge, which is the hypervisor. The elegance is that instead of claiming to be agnostic to what is going on in the underlying network substrate, this incorporates the substrate via protocols that are widely deployed today, such as BGP/VPNs. There is a lot going on in this draft, a LOT. If you aren’t quite clear or are rusty on how network virtualization works via BGP/VPN/MPLS, I highly recommend this lecture from Prof. Karandikar to prime or refresh the mem alloc; he gives a fantastic lecture.

[fancy_header3 variation=”slategrey”]draft-marques-l3vpn-end-system[/fancy_header3]

[pullquote3 align=”center” variation=”steelblue”]This document describes how the control plane specified by BGP/MPLS IP VPNs [RFC4364] can be used to provide a network virtualization solution for end-systems that meets the requirements of large scale data-centers. It specifies how the control and forwarding functions of a Provider Edge (PE) device described in [RFC4364] can be separated such that the forwarding function can be implemented in end-systems themselves. The solution is applicable to any encapsulation that can deliver packets across an IP network as a tunneled IP datagram plus a 20-bit label.[/pullquote3]

[fancy_header3 variation=”slategrey”]Not Perfect but BGP and VPNs Scale[/fancy_header3]

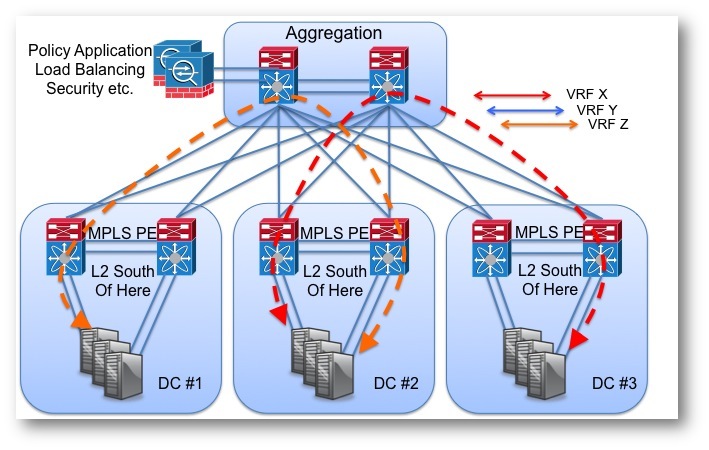

Before we dive into the draft, let’s take a peek at where we are today, from my POV at least. Until policy is pushed to the edge into software, which will not happen until it can be orchestrated, there is a central pile of gear applying it somewhere in the network. That gear can be distributed, but then you are left with the problem of managing distributed systems with no tools that interop. Not to bore anyone with story time, but I see potential here for the policy application problem that is still present today. Last year when designing a data center we leveraged BGP MPLS VPN PEs, like we do for just about everything anymore, to meet a requirement for multi-tenancy across three data centers. We have deployed BGP/VPNs in our enterprise for the last 5 years or so to support lots of different business units and compliance regimes such as PCI and HIPAA.

The need for scale, multi-tenancy and operational coherency rules out many solutions in the bag of protocols we cobble together in today’s network toolkit. The option I fall back to just about every time is MPLS/BGP VPNs (RFC4364). VLANs, while a component of an MPLS/VPN solution in a data center, are only usable south of the PE nodes. Even there you begin to open up risk. Anytime you plug in a looped layer 2 topology you begin relying on Spanning Tree or Multi-Link Aggregation Groups (MLAG: vPC, Virtual Chassis, etc.). While those work, they have strengths and weaknesses; a couple of the weaknesses are the operational complexity they introduce and single logical points of failure (e.g. split brain).

Figure 1. No problems here but what happens if one VRF needs to import another VRF?

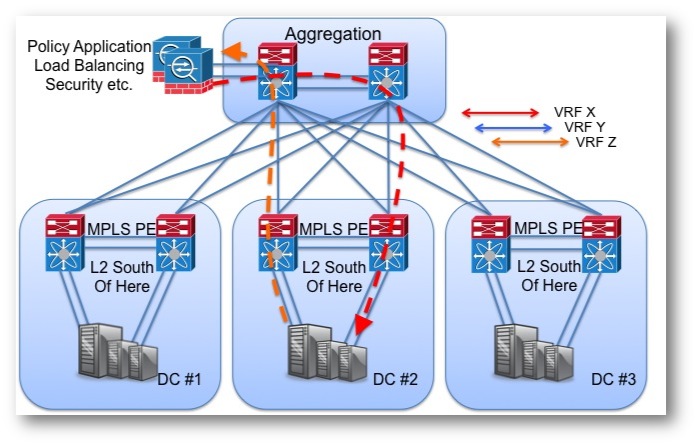

Figure 2. Hair pin to apply policy in the distribution/aggregation/spine pick your architecture.

[fancy_header3 variation=”slategrey”]The Policy Application Problem[/fancy_header3]

The purpose of a “closed user-group” is to provide path isolation between different security zones, tenants, services or whatever the use case may be; the net is pushing multiple containers over the same physical network. Forget cloud provider data centers for a second and just think of a generic enterprise data center. Most of those have at least two security zones, a frontend (DMZ etc.) and a backend. For the past couple of years we have been talking about 2-tier Clos, spine-leaf, collapsed or whatever your favorite term is, to optimize for east-west traffic. As traffic has undoubtedly shifted from 95% north-south to close to 75% east-west, what does not fit into this design is policy application. If your A and B endpoints live in two different security zones, your east-west traffic gets punted north to a central point to have policy applied between zones. Distributing policy to the edge in the hypervisor vSwitch has become popular, but scale and operational consistency for average shops seem a bit elusive from what I have seen so far. Something has to orchestrate resources well beyond just networking resource pools.

[fancy_header3 variation=”slategrey”]draft-marques-l3vpn-end-system[/fancy_header3]

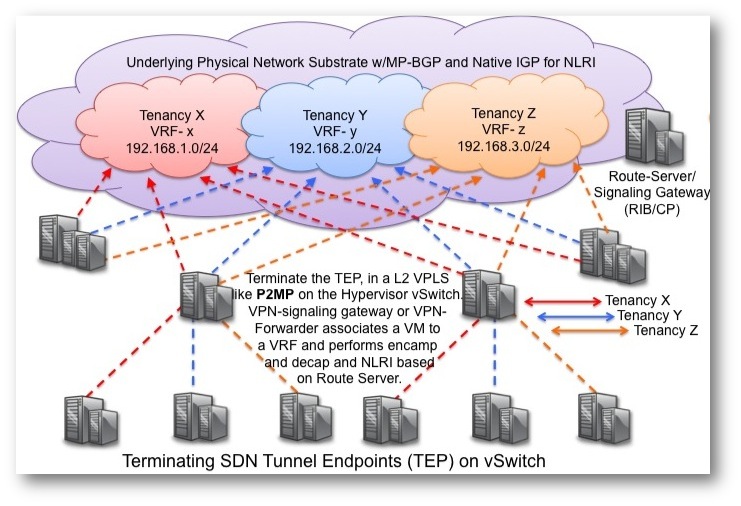

This proposal takes the tried and true MPLS/VPN framework and essentially proposes extending the provider edge (PE), or “VPN Forwarder”, to the OS/hypervisor. That would be the x86 physical server hosting VM instances. If VPN forwarding cannot be implemented on the end-system (aka the hypervisor), “it may be implemented by an external system, typically located as close as possible to the end-system itself” (top of rack, another system, etc.).

[pullquote3 align=”center” variation=”steelblue”]End-System Route Server A software application that implements the control plane functionality of a BGP IP VPN PE device and a XMPP server that interacts with VPN Forwarders.

Virtual Interface An interface in an end-system that is used by a virtual machine or by applications. It performs the role of a CE interface in a BGP IP VPN network.

VPN Forwarder The forwarding component of a BGP IP VPN PE device. This functionality may be co-located with the virtual interface or implemented by an external device.[/pullquote3]

Required functions by a VPN forwarder:

[fancy_numbers variation=”slategrey”]

- Support for multiple “Virtual Routing and Forwarding” (VRF) tables.

- VRF route entries map prefixes in the virtual network topology to a next-hop containing a infrastructure IP address and a 20-bit label allocated by the destination Forwarder. The VRF table lookup follows the standard IP lookup (best-match) algorithm.

- Associate an end-system virtual interface with a specific VRF table

- When the Forwarder is co-located with the end-system, this association is implemented by an internal mechanism. When the Forwarder is external the association is performed using the mac-address of the end-system and a IEEE 802.1Q tag that identifies the virtual interface within the end-system.

- Encapsulate outgoing traffic (end-system to network) according to the result of the VRF lookup.

- Associate incoming packets (network to end-system) to a VRF according to the 20-bit label contained immediately after the GRE header.

[/fancy_numbers]
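To make the forwarder requirements above a bit more concrete, here is a minimal Python sketch of how I read them. It is not from the draft: the names `NextHop`, `Vrf`, `pack_label` and `unpack_label` are made up for illustration, the addresses and labels are sample values, and I am assuming the 20-bit label is carried as a standard 4-byte MPLS label stack entry after the GRE header.

```python
import ipaddress
import struct
from dataclasses import dataclass, field


@dataclass(frozen=True)
class NextHop:
    """Tunnel destination: infrastructure IP plus the 20-bit label
    allocated by the destination forwarder (hypothetical type)."""
    infra_ip: str
    label: int

    def __post_init__(self):
        if not 0 <= self.label < 2 ** 20:
            raise ValueError("label must fit in 20 bits")


@dataclass
class Vrf:
    """One VRF table: virtual-network prefixes mapped to next hops."""
    name: str
    routes: dict = field(default_factory=dict)

    def add_route(self, prefix: str, next_hop: NextHop) -> None:
        self.routes[ipaddress.ip_network(prefix)] = next_hop

    def lookup(self, dst: str):
        """Standard best-match (longest-prefix) lookup; None if no route."""
        addr = ipaddress.ip_address(dst)
        matches = [net for net in self.routes if addr in net]
        if not matches:
            return None
        return self.routes[max(matches, key=lambda net: net.prefixlen)]


def pack_label(label: int, ttl: int = 64) -> bytes:
    """Encode a label as a 4-byte MPLS label stack entry.
    Layout: 20-bit label, 3-bit traffic class (0), bottom-of-stack bit, TTL."""
    return struct.pack("!I", (label << 12) | (1 << 8) | ttl)


def unpack_label(entry: bytes) -> int:
    """Recover the 20-bit label from a 4-byte MPLS label stack entry."""
    (word,) = struct.unpack("!I", entry)
    return word >> 12


# Usage: one VRF per tenant, a virtual interface bound to it, then a lookup.
tenant_a = Vrf("tenant-a")
tenant_a.add_route("10.1.0.0/16", NextHop("192.0.2.1", 100))
tenant_a.add_route("10.1.2.0/24", NextHop("192.0.2.2", 200))
interface_to_vrf = {"tap0": tenant_a}   # co-located forwarder: internal mapping

hop = interface_to_vrf["tap0"].lookup("10.1.2.5")
print(hop.infra_ip, hop.label, pack_label(hop.label).hex())
```

The lookup is a linear scan, which is fine for a sketch; a real forwarder would use a trie or whatever the vSwitch data path provides.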

[fancy_header3 variation=”slategrey”]XMPP Signaling Protocol[/fancy_header3]

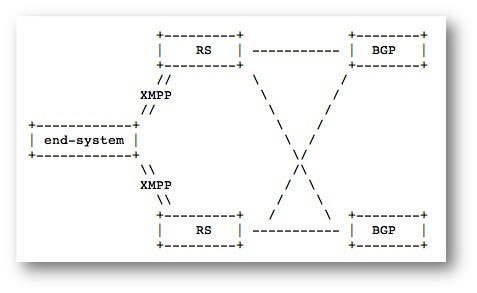

In order for an end-system to be aware of which routes are in its VRF, based on the policy applied from the route server, that metadata needs to be fed in. The protocol proposed is the Extensible Messaging and Presence Protocol (XMPP), which came out of the Jabber project in the late 90’s. It is a client-server framework that supports TLS for encryption and SASL for authentication. XMPP uses TCP for transport, along with an HTTP binding in which the client sends and retrieves data via HTTP ‘POST’ and ‘GET’ on ports 80 and 443.

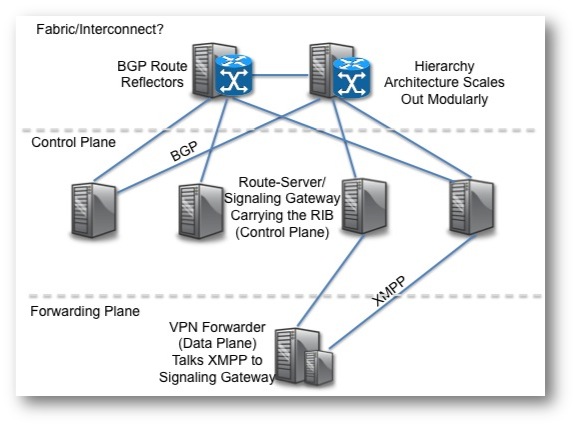

Figure 3. XMPP is the signaling protocol between VPN forwarder (Forwarding Plane) and Signaling Gateway (Control Plane) carrying meta data.

```xml
<!-- Example snippet from the draft -->
<iq type='set'
    from='01020304abcd@domain.org'
    to='network-control.domain.org'
    id='sub1'>
  <pubsub xmlns='http://jabber.org/protocol/pubsub'>
    <subscribe node='vpn-customer-name'/>
  </pubsub>
</iq>
```

The request above instructs the End-System Route Server to start populating the client’s VRF table with any routing information that is available for this VPN. The XMPP node ‘vpn-customer-name’ is assumed to be a collection which is implicitly created by the End-System Route Server. Creation of a virtual interface may precede any IP address becoming active on the interface, as is the case with VM instantiation.
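For anyone who wants to poke at the subscription request, here is a rough Python sketch that builds and parses the same stanza using only the standard library. The function names `build_subscribe_iq` and `parse_subscribe` are my own invention, not anything the draft defines, and a real forwarder would send this over an authenticated XMPP stream rather than just producing a string.

```python
import xml.etree.ElementTree as ET

PUBSUB_NS = "http://jabber.org/protocol/pubsub"


def build_subscribe_iq(system_id: str, server: str, vpn_name: str, iq_id: str) -> str:
    """Build the pubsub subscribe request a VPN forwarder would send (sketch)."""
    iq = ET.Element("iq", {"type": "set", "from": system_id, "to": server, "id": iq_id})
    # The namespace is written as a plain xmlns attribute so the output
    # matches the shape of the stanza in the draft.
    pubsub = ET.SubElement(iq, "pubsub", {"xmlns": PUBSUB_NS})
    ET.SubElement(pubsub, "subscribe", {"node": vpn_name})
    return ET.tostring(iq, encoding="unicode")


def parse_subscribe(stanza: str):
    """Route-server side: return (system-id, vpn name) from a subscribe request."""
    iq = ET.fromstring(stanza)
    sub = iq.find(f"{{{PUBSUB_NS}}}pubsub/{{{PUBSUB_NS}}}subscribe")
    if iq.get("type") != "set" or sub is None:
        raise ValueError("not a pubsub subscribe request")
    return iq.get("from"), sub.get("node")


stanza = build_subscribe_iq(
    "01020304abcd@domain.org", "network-control.domain.org",
    "vpn-customer-name", "sub1",
)
print(stanza)
print(parse_subscribe(stanza))
```

The tuple that comes out of `parse_subscribe` is the (‘system-id’, ‘vpn-customer-name’) pair the route server needs in order to find the matching route targets.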

[pullquote3 align=”center” variation=”steelblue”]When an End-System Route Server receives a request to create or modify a VPN route it SHALL generate a BGP VPN route advertisement with the corresponding information.

It is assumed that the End-System Route Servers have information regarding the mapping between end-system tuple (‘system-id’, ‘vpn-customer-names’) and BGP Route Targets used to import and export information from the associated VRFs. This mapping is known via an out-of-band mechanism not specified in this document.

In this solution, the Host OS/Hypervisor in the end-system must participate in the virtual network service. Given an end-system with multiple virtual interfaces, these virtual interfaces must be mapped onto the network by the guest OS such that applications on one virtual interface are not allowed to impersonate another virtual interface.

When VPN forwarder functionality is implemented by the Host OS/ Hypervisor, intermediate systems in the network do not require any knowledge of the virtual network topology. This can simplify the design and operation of the physical network.[/pullquote3]

Figure 4. My interpretation of what this draft would look like.

[fancy_header3 variation=”slategrey”]What makes this SDN Overlay Solution unique?[/fancy_header3]

We have seen quite a few data plane solutions come through: L2 encapsulations like VXLAN and NVGRE (Ivan does a much better job explaining the nuances than I do). Those encapsulations are a part of the puzzle but do not address the control plane. Recently Broadcom announced support for NVGRE and VXLAN in the very popular StrataXGS Trident II chipset, which is the staple of almost all merchant-silicon-driven platforms (Greg’s explanation is a must read if you haven’t seen it). I for one am not chomping at the bit to try and gain visibility of L2 overlays in the data center spines, P routers, aggregation etc. That feels like a re-invention of the wheel. If we are going that far, why not start over?

This draft is unique in that it actually looks to standardize both the control plane and the data/forwarding plane components. It begins to touch on management aspects, or at least offers a centralized point in the form of route servers that could act as a gateway to policy application if you stuck an API on it. Prior to this draft, in everything I have seen from the emerging data center SDN arena, the control plane answer is either nothing, something weak (multicast) or a closed, proprietary special sauce such as Nicira (NVP) and IBM (DOVE). The alternative is OpenFlow, but so far there is a void in the northbound applications that would be required to manage controllers (it is still early; BigSwitch appears very close). Again, what we do in the data center should begin looking very different from enterprise and service provider networks. The one thing other than IP that binds the three verticals together is the management promise. Proprietary protocols that create logical instances (MLAG, Multilink Aggregation Groups) or stacking, either virtual or physical, to create non-blocking fabrics are all wrapped in proprietary hardware, software and APIs. Interop is DOA.

[fancy_header3 variation=”slategrey”]Control Plane Components[/fancy_header3]

The heart of the control plane is the Signaling Gateway (Route Server). The VPN forwarder (hypervisor/end-system) is the forwarding plane. The signaling gateway is an application that sits on a server and interacts with the VPN forwarder via the XMPP signaling protocol described earlier. XMPP carries the metadata that identifies which VRFs you belong to and interact with (route targets). Signaling requests travel from the forwarder to the route server over XMPP, via an out-of-band connection the draft does not specify, and on arrival the signaling gateway consults the RT-Constraint Routing Information Base (RIB). RFC4364 is specifically referenced for how BGP speakers exchange route target updates (RT-Constraint itself is defined in RFC 4684).

Figure 5. How it seems to me now, today, likely to change 15 times a day, shiny objects in the mirror may seem shinier.

[pullquote3 align=”center” variation=”steelblue”]The functionality present in the BGP IP VPN control plane addresses the requirements specified in the previous section. Specifically, it supports multiple potentially overlapping “groups”, regular or “hub and spoke” topologies and the scaling characteristics necessary.

The BGP IP VPN control plane supports not only the definition of “closed user-groups” (VPNs in its terminology) but also the propagation of inter-VPN traffic policies [RFC5575]. An application of that mechanism to “end-system” VPNs is presented in [I-D.marques-sdnp-flow-spec].

Note that the signaling protocol itself is rather agnostic of the encapsulation used on the wire as long as this encapsulation has the ability to carry a 20 bit label.

Whenever the End-System Route Server receives an XMPP subscription request, it SHALL consult its RT-Constraint Routing Information Base (RIB). If the Route Server does not already have locally originated route for the route target that corresponds to the vpn-name present in the request, it SHALL create one and generate the corresponding BGP route advertisement. This route advertisement should only be withdrawn when there are no more downstream XMPP clients subscribed to the VPN.

The 32bit route version number defined in the XML schema is advertised into BGP as an Extended community with type TBD.

End-System Route Servers SHOULD automatically assign a BGP route distinguisher per VPN routing table.[/pullquote3]
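The subscribe and withdraw rule quoted above is basically reference counting per route target. Here is a small Python sketch of that behavior as I understand it. Everything in it is hypothetical: the class name `RouteServerSketch`, the `events` log that stands in for real BGP updates, and the `target:64512:1` string, which is just a sample route target. The VPN-name-to-route-target mapping is passed in because the draft says it arrives out of band.

```python
from collections import defaultdict


class RouteServerSketch:
    """Toy model of the RT-Constraint handling in an End-System Route Server."""

    def __init__(self, rt_for_vpn):
        self.rt_for_vpn = dict(rt_for_vpn)    # out-of-band: vpn name -> route target
        self.subscribers = defaultdict(set)   # route target -> subscribed system-ids
        self.events = []                      # stand-in for BGP advertisements

    def subscribe(self, system_id, vpn_name):
        rt = self.rt_for_vpn[vpn_name]
        # First downstream client for this route target: originate the route.
        if not self.subscribers[rt]:
            self.events.append(("advertise", rt))
        self.subscribers[rt].add(system_id)

    def unsubscribe(self, system_id, vpn_name):
        rt = self.rt_for_vpn[vpn_name]
        members = self.subscribers[rt]
        if system_id not in members:
            return                            # nothing to do for unknown clients
        members.remove(system_id)
        # Withdraw only when no downstream XMPP clients remain subscribed.
        if not members:
            self.events.append(("withdraw", rt))


rs = RouteServerSketch({"vpn-customer-name": "target:64512:1"})
rs.subscribe("hypervisor-1", "vpn-customer-name")
rs.subscribe("hypervisor-2", "vpn-customer-name")
rs.unsubscribe("hypervisor-1", "vpn-customer-name")
rs.unsubscribe("hypervisor-2", "vpn-customer-name")
print(rs.events)
```

Two hypervisors subscribing to the same VPN produce a single advertisement, and the withdraw only goes out once the last one leaves.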

[fancy_header3 variation=”slategrey”]Summary[/fancy_header3]

This looks good for a data center solution. I am pretty biased when it comes to the opportunity to work with legacy gear through BGP IP VPNs, which presents a migration path. It is a holistic solution with a blueprint rooted in an open, standards-based framework rather than just a data-plane-only solution. Still lingering is the policy application problem, and that is a software problem. How does east-west traffic within a hypervisor know when and when not to import a host route from one VRF into another, without the packet ever hitting the physical substrate and being pointed north-south? Can that even be done efficiently using L2-4 headers only, or will it require deep packet inspection?

There is still plenty of room for differentiation in both hardware and software. The software abstraction and orchestration of both the forwarding and control plane components are still missing. That presents a good chunk of revenue, and it should be possible to keep it agnostic and decoupled from the substrate if proper primitives and APIs are present and standardized. If the VRF imports can be part of the ecosystem and be instantiated into the RIB in the signaling gateway as part of the overall data center orchestration, then this becomes a compelling framework. Getting east-west policy application plus a control plane, all in a standardized manner, makes it the most interesting thing I read this week. The landscape still seems far too unstable to start expecting anything north in the form of mature orchestration, but you need a good foundation before the house if you want it to last. I expect big things from Contrail and whoever buys them in the next (X) months. This is a data center solution, not an overall solution. Last time I looked I couldn’t find any hypervisors or vSwitches in communications closets and GigaPoPs. These are pieces of a puzzle that do not represent radical change but smart change.

If BGP and MPLS are all that we needed and we were just composing the legos wrong, I will be surprised. This doesn’t solve application classification in a simple enough manner for me that a non-networking developer would be able to understand without a decade in networking (I can barely figure it out fwiw). Juniper has become religious over technology much like Cisco is over ASICs. This clearly appears to have their fingerprints of IP, BGP and MPLS (Father, Son and Holy Ghost).

I’ve always wondered at the ridiculous complexity networkers introduce to achieve multi-tenancy that really only provides security through obscurity.

The only secure solution for multi-tenancy is IPsec transport-mode policies applied on the tenant servers (VMs these days). All relevant server OSes have supported this for decades.

I keep finding your blog in searches… black hat SEO? Haha… seriously, great article, but I think I’ll need to go back and read it a few more times. Since this blog, we obviously know about the vRouter, but I’d love to see your updated take.

Ha, thanks John! It’s embarrassing when I hit my own site in searches…

Cheers bro,

-Brent
