[colored_box variation=”steelblue”]

- Installing OpenStack Grizzly with DevStack : Here is an updated Grizzly DevStack tutorial since Folsom is coming to an end.

[/colored_box]

Update: My latest installation document for Folsom can be found here. It’s too tough to try and keep up with debugging installers myself so I am just using DevStack in that tutorial. Thanks!

Here is a two-parter on how to bring up an OpenStack build on VirtualBox. A screencast accompanies the two sections. I live in networks, so if you see me commit all sorts of bad practice in scripting and systems configs, it should be expected. Live demos can get squirrely, and herding unicorns is never easy, so whatever is not in the video is written in the steps below (mostly..).

Keep in mind this is just VMs on a laptop. OpenStack Essex is the latest release in the 6-month software lifecycle. For a customer, it is essentially a software abstraction for orchestrating not only your on-premises data center, but also your peak/elastic/hybrid/private/public bursting to the blabbity blah cloud. For a service provider, this is the bread and butter of multitenant orchestration, automation, provisioning, etc. Just as in networking we exchange information over pre-defined sets of protocols to pass rumors to one another, the rest of the data center faces the same problem today. By using APIs or gateways to data, we can create a single infrastructure abstraction one layer up the stack, as opposed to a bunch of components loosely coupled together.

The current trends are collaborative, inclusive, community-driven initiatives, and the overarching economic incentive for the vendors is as much defensive as anything. It is the consumers collectively demanding innovation, as opposed to the vendors serving stockholders. Granted, many of the “consumers” are also vendors, but it is a much more accountable process than anything witnessed in the infancy of the first century of the post-microprocessor era. My favorite people are those unwilling to recognize the fundamental paradigm shift we are going through. It is probably very similar to the first two quarters of a recession: some people realize what is happening, but the majority either cannot or will not accept the trend until their portfolio is in shambles. On to the video and post!

- Part 1 is a scripted install and how to spin up a couple of compute nodes.

- Part 2 is what to do with it after I have some nodes provisioned. It was pretty late at this point in the recording and I was half asleep. That’s my disclaimer for most things in life 🙂

[youtube height=”480″ width=”640″]http://www.youtube.com/watch?v=K1fgBOXpAkU&hd=1[/youtube]

Part 1 video of the install and provisioning:

Nova:

Nova is the guts of the Orchestration. Scheduling, messaging, API, compute, object-store etc are vital. Essex Notes:

• nova-api accepts and responds to end user compute and volume API calls. It supports OpenStack API, Amazon’s EC2 API and a special Admin API (for privileged users to perform administrative actions). It also initiates most of the orchestration activities (such as running an instance) as well as enforces some policy (mostly quota checks). In the Essex release, nova-api has been modularized, allowing for implementers to run only specific APIs.

• The nova-compute process is primarily a worker daemon that creates and terminates virtual machine instances via hypervisor’s APIs (XenAPI for XenServer/XCP, libvirt for KVM or QEMU, VMwareAPI for VMware, etc.). The process by which it does so is fairly complex but the basics are simple: accept actions from the queue and then perform a series of system commands (like launching a KVM instance) to carry them out while updating state in the database.

• nova-volume manages the creation, attaching and detaching of persistent volumes to compute instances (similar functionality to Amazon’s Elastic Block Storage). It can use volumes from a variety of providers such as iSCSI or Rados Block Device in Ceph.

• The nova-network worker daemon is very similar to nova-compute and nova-volume. It accepts networking tasks from the queue and then performs tasks to manipulate the network (such as setting up bridging interfaces or changing iptables rules).

• The nova-scheduler process is conceptually the simplest piece of code in OpenStack Nova: it takes a virtual machine instance request from the queue and determines where it should run (specifically, which compute server host it should run on).

• The queue provides a central hub for passing messages between daemons. This is usually implemented with RabbitMQ today, but could be any AMQP message queue (such as Apache Qpid).

• The SQL database stores most of the build-time and run-time state for a cloud infrastructure. This includes the instance types that are available for use, instances in use, networks available and projects. Theoretically, OpenStack Nova can support any database supported by SQL-Alchemy but the only databases currently being widely used are sqlite3 (only appropriate for test and development work), MySQL and PostgreSQL.
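To make the division of labor above a bit more concrete, here is a toy Python sketch of the request path: an API function drops a request on a queue, a scheduler picks a host, and a compute worker "launches" the instance and records state. Everything in it is made up for illustration (the function names, the least-loaded scheduling rule, the dict standing in for the SQL database); it is not Nova code and only mirrors the flow described in the notes.

```python
import queue


def api_run_instance(msg_queue, db, name):
    """Stand-in for nova-api: record the request and put it on the queue."""
    db["instances"][name] = {"state": "scheduling", "host": None}
    msg_queue.put({"action": "run_instance", "name": name})


def scheduler_step(msg_queue, db):
    """Stand-in for the scheduler: take one request and pick a host for it."""
    message = msg_queue.get()
    # Hypothetical rule: choose the host currently running the fewest instances.
    host = min(db["hosts"], key=db["hosts"].get)
    return host, message


def compute_step(db, host, message):
    """Stand-in for nova-compute: 'launch' the VM and update state."""
    db["hosts"][host] += 1
    db["instances"][message["name"]] = {"state": "active", "host": host}


def demo():
    """Run two instance requests through the toy pipeline."""
    msg_queue = queue.Queue()
    db = {"instances": {}, "hosts": {"compute1": 0, "compute2": 0}}
    for vm in ("web", "db"):
        api_run_instance(msg_queue, db, vm)
        host, message = scheduler_step(msg_queue, db)
        compute_step(db, host, message)
    return db


if __name__ == "__main__":
    print(demo())
```

The point is only that the daemons never call each other directly: they pass messages through the queue and share state through the database.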

Hypervisor:

For this demo we are using QEMU, a simple hypervisor. Below is a list of the supported hypervisors with links to a relevant web site for configuration and use:

• KVM – Kernel-based Virtual Machine. It inherits its supported virtual disk formats from QEMU, since it uses a modified QEMU program to launch the virtual machine. The supported formats include raw images, qcow2, and VMware formats.

• LXC – Linux Containers (through libvirt), used to run Linux-based virtual machines.

• QEMU – Quick EMUlator, generally only used for development purposes.

• UML – User Mode Linux, generally only used for development purposes.

• VMware ESX/ESXi 4.1 update 1, runs VMware-based Linux and Windows images through a connection with the ESX server.

• Xen – XenServer 5.5, Xen Cloud Platform (XCP), used to run Linux or Windows virtual machines. You must install the nova-compute service on DomU.

Images: The images the script uses for this install are Ubuntu Oneiric 11.10 from ubuntu.com. The default repo is:

http://docs.openstack.org/trunk/openstack-compute/admin/content/starting-images.html

The images use the EC2 repository for distributions and it seems to get out of sync. I switch to archive.ubuntu.com and will cover it in the screencast and steps in the post by editing the /etc/repo.

Storage: For this demo we are just using the default local filesystem for storage. Swift appears to be lined up to replace this module from the Nova core.

• OpenStack Object Storage – OpenStack Object Storage is the highly-available object storage project in OpenStack.

• Filesystem – The default backend that OpenStack Image Service uses to store virtual machine images is the filesystem backend. This simple backend writes image files to the local filesystem.

• S3 – This backend allows OpenStack Image Service to store virtual machine images in Amazon’s S3 service.

• HTTP – OpenStack Image Service can read virtual machine images that are available via HTTP somewhere on the Internet. This store is readonly.

Network: Nova-Network is the current module for Layer 2 bridging in the Essex build. Quantum is the future component, which appears to be getting rolled in officially with the Fall 2012 “Folsom” release. The current networking components are probably the least mature of the modules, since they rely on the bridging kernel module in Linux.

There has been a great deal of code development from many of the vSwitch developers (e.g. Cisco, Nicira, OVS), to have API integration into Quantum. Data Center software switches will be the crux of enabling cloud mobility via OpenStack especially at hyper-scale. Network abstraction allows for programmatic approaches to automation and provisioning. I did not spend much time digging under the hood of Nova-network, since it will likely be replaced by Quantum in the next SW cycle this fall.

Demo interfaces:

• Eth0 – NAT under VirtualBox. Leave it DHCP.

• Eth1 – Set up as host-only. The host-only network needs to be created globally in VirtualBox the first time before you can add it to an instance.

• Eth2 – Compute nodes live here. Vlan100 will be bound to this interface from the install script and it will appear as br100 from ifconfig.

Nova Network commands and default logs:

cat /var/lib/nova/networks/nova-br100.conf – bridging config

/var/log/nova/nova-network.log – logs

cat /etc/init/nova-network.conf – config load

/etc/init.d/nova-network – upstart / startup config

Currently, Nova supports three kinds of networks, implemented in three “Network Manager” types respectively: Flat Network Manager, Flat DHCP Network Manager, and VLAN Network Manager.

Installation:

Install VirtualBox. I am doing this on a MacBook. You will have three interfaces as listed above. Eth0 will be left NATing in VirtualBox, Eth1 (virbr0 in Vbox) is host only 172.16.0.0/16 and Eth2 (virbr1 in Vbox) is 10.0.0.0/8.

I did a 15GB drive and used 4 cores and 3GB of memory. I have done it with one core and 1.5GB of memory and didn’t really notice much of a difference.

I used the beta2 Ubuntu 12.04 LTS (Precise Pangolin) daily build.

Once booted, optionally update your OS and set a root password so you can su to root. No flak, please; I don’t feel like sudoing every command in dev.

#sudo passwd root

#su

#apt-get update; apt-get upgrade

I also recommend installing the guest addons found in the VirtualBox ‘Devices’ menu. This will optimize perf depending on the box you are doing this on.

I tend to gut network managers on a fresh install; you could just stop the service if you would rather.

#apt-get purge network-manager

Edit your networking to reflect what is below.

#nano /etc/network/interfaces

(beginning)

#Primary interface NAT interface

auto eth0

iface eth0 inet dhcp

#public interface – The API village

auto eth1

iface eth1 inet static

address 172.16.0.1

netmask 255.255.0.0

network 172.16.0.0

broadcast 172.16.255.255

#Private Vlan Land of Compute Nodes

auto eth2

iface eth2 inet manual

up ifconfig eth2 up

(end)
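A typo in the static stanza (say, a wrong broadcast) is easy to miss, so here is a small Python sketch that cross-checks the four values against each other. The function name `check_static_stanza` is my own, and it only checks the arithmetic; it does not read or write /etc/network/interfaces.

```python
import ipaddress


def check_static_stanza(address, netmask, network, broadcast):
    """Return a list of problems found in a static interface stanza."""
    # ip_interface accepts the dotted netmask form, e.g. 172.16.0.1/255.255.0.0
    iface = ipaddress.ip_interface(f"{address}/{netmask}")
    problems = []
    if str(iface.network.network_address) != network:
        problems.append(f"network should be {iface.network.network_address}")
    if str(iface.network.broadcast_address) != broadcast:
        problems.append(f"broadcast should be {iface.network.broadcast_address}")
    return problems


if __name__ == "__main__":
    # Values copied from the eth1 stanza above.
    print(check_static_stanza("172.16.0.1", "255.255.0.0",
                              "172.16.0.0", "172.16.255.255"))
```

An empty list means the eth1 values agree with each other.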

Restart the networking service.

#/etc/init.d/networking restart

Test Inet connectivity. ping google.com or some Inet host to make sure you have good connectivity.

Pull down the installer script. Uksysadmin wrote this script and it works very well. The great thing about a script (especially for a network knucklehead like myself) is that it’s very easy to parse through and change anything you want to customize for yourself. There are a bunch out there at the moment, and most break quickly. This one works very well and beats compiling each component from scratch just for testing.

More installer links.

http://docs.openstack.org/bexar/openstack-compute/admin/content/ch03s02.html

http://devstack.org/

Install git from the repos:

#apt-get install git

Clone the installer:

#git clone https://github.com/uksysadmin/OpenStackInstaller.git

#cd OpenStackInstaller

#git checkout essex

Run the installer. This will add the network interfaces, DHCP and addresses you need automagically:

#./OSinstall.sh -F 172.16.1.0/24 -f 10.1.0.0/16 -s 512 -t demo -v qemu
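Before running it with your own ranges, it can help to sanity-check that they sit inside the interface networks set up earlier. The Python sketch below does that, under the assumption that -F is the floating range (living on eth1, 172.16.0.0/16) and -f is the fixed range (living on eth2, 10.0.0.0/8). That reading of the flags is my assumption, so check the script's own usage text. `range_fits` is a hypothetical helper name.

```python
import ipaddress


def range_fits(candidate, parent):
    """True if the candidate CIDR sits entirely inside the parent CIDR."""
    return ipaddress.ip_network(candidate).subnet_of(ipaddress.ip_network(parent))


if __name__ == "__main__":
    # Assumed mapping: -F (floating) on eth1's network, -f (fixed) on eth2's.
    print("floating ok:", range_fits("172.16.1.0/24", "172.16.0.0/16"))
    print("fixed ok:", range_fits("10.1.0.0/16", "10.0.0.0/8"))
```

`subnet_of` needs Python 3.7 or newer.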

Edit nova.conf and add the ‘my_ip’ value to the end of nova.conf:

#nano /etc/nova/nova.conf

--my_ip=172.16.0.1

WordPress mangles hyphens: there are two of them before ‘my_ip’, i.e. a double dash.

You should see the following processes running at this point. If not, start scouring the logs or respawn the service with

/etc/init.d/ restart.

# ps -ea | grep glance

1345 ? 00:00:00 glance-api

1346 ? 00:00:00 glance-registry

# ps -ea | grep nova

1340 ? 00:00:21 nova-compute

1341 ? 00:00:16 nova-network

1342 ? 00:00:03 nova-api

1343 ? 00:00:10 nova-scheduler

1344 ? 00:00:00 nova-objectstor
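If you would rather not eyeball the list, this Python sketch compares `ps -ea` style output against the daemon names shown above and reports what is missing. The helper name `missing_daemons` is my own, and the expected list is simply copied from the listing (including the truncated nova-objectstor, since that is how ps prints it there).

```python
# Daemon names exactly as they appear in the ps listing above.
EXPECTED = (
    "glance-api",
    "glance-registry",
    "nova-compute",
    "nova-network",
    "nova-api",
    "nova-scheduler",
    "nova-objectstor",
)


def missing_daemons(ps_output, expected=EXPECTED):
    """Return the expected daemon names that do not appear in ps output."""
    running = set()
    for line in ps_output.splitlines():
        fields = line.split()
        if fields:
            # The command name is the last column of `ps -ea` output.
            running.add(fields[-1])
    return [name for name in expected if name not in running]


if __name__ == "__main__":
    sample = "1345 ? 00:00:00 glance-api\n1340 ? 00:00:21 nova-compute\n"
    print(missing_daemons(sample))
```

On the controller you could feed it the real output, for example from `subprocess.run(["ps", "-ea"], capture_output=True, text=True).stdout`.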

In Ubuntu 12.04, the database tables are under version control, so run the following on a new install to prevent the Image service from breaking possible upgrades:

#glance-manage version_control 0

#glance-manage db_sync

Restart Glance just in case; it’s probably not necessary:

#/etc/init.d/glance-api restart

#/etc/init.d/glance-registry restart

Download the image. This portion was a bit hit and miss for me. A circuit blew from the pile of gear in my ghettofab lab and clipped the download during one attempt. If you don’t see “saved [197289496/197289496]” and instead see “saved []” with an empty value, the image will not show in the Nova dashboard.

#./upload_ubuntu.sh -a admin -p openstack -t demo -C 172.16.0.1

If you need a couple of attempts, you may need to delete the downloaded image in /tmp/__upload/ first. Only do this if you get “ubuntu 11.10 i386 now available in Glance ()” with an empty ID, or nothing in your Images menu in the Dashboard later in the install:

# rm /tmp/__upload/*

A successful run looks like this:

Saving to: /tmp/__upload/ubuntu-11.10-server-cloudimg-i386.tar.gz'

100%[======================================>] 197,289,496 610K/s in 5m 31s

2012-04-20 01:49:56 (581 KB/s) - /tmp/__upload/ubuntu-11.10-server-cloudimg-i386.tar.gz’ saved [197289496/197289496]

ubuntu 11.10 i386 now available in Glance (d19924ae-56e7-41bc-9f02-bbc8d7265cdf)
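Since the whole thing hinges on that “saved [...]” line, here is a small Python sketch that checks it for you. It assumes the wget output has been captured to a string; the helper name `download_complete` is my own.

```python
import re

# Matches "saved [received/expected]"; wget prints "saved [received]" when
# the server did not report a length, so the second number is optional.
SAVED = re.compile(r"saved \[(\d+)(?:/(\d+))?\]")


def download_complete(wget_log):
    """True if the log reports a saved file whose byte counts match."""
    match = SAVED.search(wget_log)
    if match is None:
        # Covers the "saved []" case described above: no byte count at all.
        return False
    received, expected = match.group(1), match.group(2)
    return expected is None or received == expected


if __name__ == "__main__":
    print(download_complete("(581 KB/s) - file saved [197289496/197289496]"))
    print(download_complete("file saved []"))
```

If it returns False, clear out /tmp/__upload/ and rerun the upload script.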

Now it’s clickety-click time:

Log into the dashboard at http://172.16.0.1

Username: demo

Password: openstack

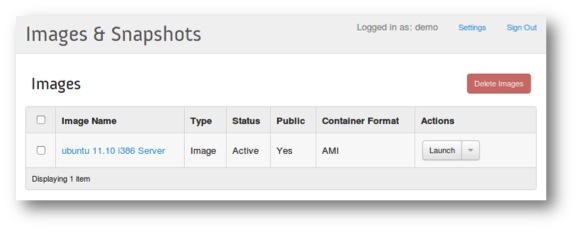

Make sure you see “ubuntu 11.10 i386 Server” under Project -> Images & Snapshots. If you don’t, troubleshoot the image upload and the Glance install/config.

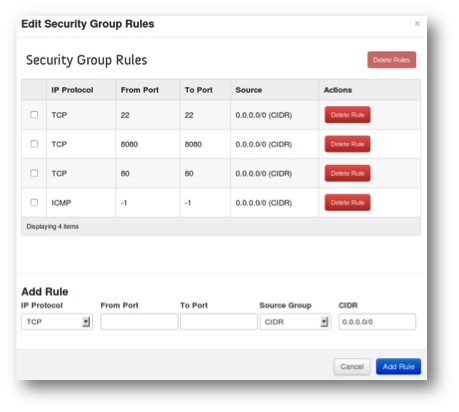

Under Projects – Demo, go to Access & Security and create a keypair. Save the key somewhere you can get to it later.

Edit the default rules with:

| IP Protocol | From Port | To Port | Source |
|---|---|---|---|
| TCP | 22 | 22 | 0.0.0.0/0 (CIDR) |
| ICMP | -1 | -1 | 0.0.0.0/0 (CIDR) |
| TCP | 8080 | 8080 | 0.0.0.0/0 (CIDR) |
| TCP | 80 | 80 | 0.0.0.0/0 (CIDR) |
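To see what those rules actually let through, here is a toy Python model of them. It is only a sketch of the matching logic as I understand it (protocol, port range, source CIDR, with -1 on ICMP treated as “any type”); it is not how Nova implements security groups, and `is_allowed` is a hypothetical name.

```python
import ipaddress

# (protocol, from_port, to_port, source CIDR), copied from the rules above.
RULES = [
    ("tcp", 22, 22, "0.0.0.0/0"),
    ("icmp", -1, -1, "0.0.0.0/0"),
    ("tcp", 8080, 8080, "0.0.0.0/0"),
    ("tcp", 80, 80, "0.0.0.0/0"),
]


def is_allowed(protocol, port, source_ip, rules=RULES):
    """True if any rule matches the protocol, port and source address."""
    source = ipaddress.ip_address(source_ip)
    for rule_proto, from_port, to_port, cidr in rules:
        if rule_proto != protocol:
            continue
        if source not in ipaddress.ip_network(cidr):
            continue
        # Assumption: -1 means "any", as used for the ICMP rule.
        if from_port == -1 or from_port <= port <= to_port:
            return True
    return False


if __name__ == "__main__":
    print("ssh:", is_allowed("tcp", 22, "192.0.2.10"))
    print("https:", is_allowed("tcp", 443, "192.0.2.10"))
```

With these rules ssh, ping and the two web ports get through, and anything else (HTTPS, for example) is dropped.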

Time to launch an image!

Under Project -> Images & Snapshots, click Launch, name the instance, and choose the default security policy. Also, choose the keypair you created from the dropdown.

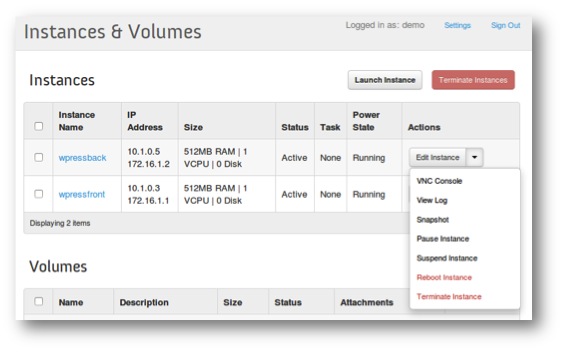

After you launch your instances you should see them provisioned and available in Dashboard.

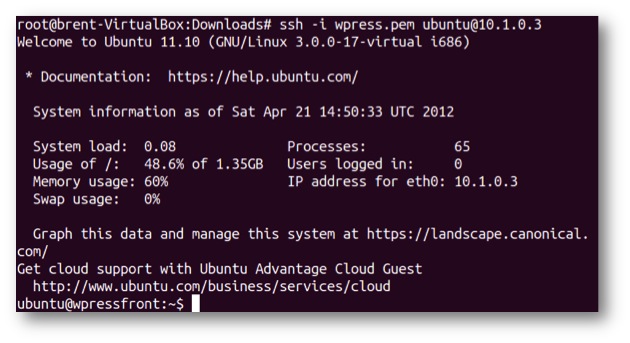

If that all looks like the above, then let’s connect to the hosts using the key we saved earlier. Use ubuntu as the login ID. You can get the IP from the Dashboard under Instances & Volumes, or dump the ARP tables from the new node that was spun up.

cd into the directory with your key.

#ssh -i demo.pem ubuntu@10.1.0.x
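If ssh just hangs or reports no route to host, it is worth checking whether port 22 on the instance is reachable at all before blaming the key. A minimal Python probe, run from the controller, could look like this. The function name `port_open` is my own, and the address you pass it is whatever fixed IP the Dashboard shows for your instance.

```python
import socket


def port_open(host, port=22, timeout=3.0):
    """True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and "no route to host".
        return False
```

A False here points at the network side (bridge, security group rules, instance not fully booted) rather than at the keypair.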

Now we have a self-provisioned abstracted compute node! Soooo what do we do with this thing? I labbed a modified example from the admin guide at OpenStack.org, a WordPress frontend and backend server. I am breaking this into two parts for anyone who doesn’t like scrolling down. Part 2 is an example of what we can do with the compute nodes once we have them provisioned.

Hi Brent,

Do you have any manual or clue on how to add a new compute node in this OpenStack?

Thanks a lot,

Hey pal, check that script. I think you can pass an argument for a compute node. I am going to try and tweak it tonight with a build for two nics. Email me if you want to collab on it. I will post results either way. Want to get a working script or a box with two nics and clustered with compute nodes. Cya, Brent

Hi,

Is it possible to test VM migration under this setup?

hi Brent! thank you for the tuto!

so i have an issue concerning the instances of the VM!

in my case i can’t access my VMs by ssh or VNC

i haven’t any error in the log can you help me please!

In appearance all goes well!

I’m using this tutorial :http://networkstatic.net/2012/05/openstack-essex-and-quantum-installation-using-openvswitch-from-scratch/

i don’t understand this problem!

Best Regards

Bahroun Nesrine

Hi nesrine. That can be tricky. Are you trying to get to them from the Openstack controller host or from another machine outside of that? What you can do to attach off the host is add a floating address that is routable. assign that to a host and it should be usable out of the floating public pool. Let me know what you see with that and i will try and get back reasonably quick.

hi Brent!

until now i’m using all-in one infrastructure, but i can to add a floating address for VMs but when i ping them the network is unreachable!

thank you in advance

Hi Brent

Thanks for this valuable tutorial

but i have a question: can i install openstack the way you did with only one ethernet card? i mean is it possible to have 2 network interfaces ?

in case it’s, how should i proceed with the configuration?

Hi

Thank you very much. You just saved 2-3 weeks of my life.

I installed OpenStack all according to your video on Ubuntu 12.04 server 64bit. However i am receiving some errors

When i click “Project” i get this error:

….

Exception Value:

string indices must be integers, not str

Exception Location: /usr/lib/python2.7/dist-packages/novaclient/base.py in _get, line 151

….

When i click “Flavors” i get a red banner:

Error: Unable to get flavor list: string indices must be integers, not str.

Any help will be highly appreciated.

Hello,

I installed OS following your guide (very very thanks).

But now i can’t use the usual commands as specified in the open stack official guide (for instance #glance index #nova list …) why? it says something like “not contain endpoint”

Hmm, I haven’t tried that in a while. Here is the GitHub repo for uksysadmin, who wrote the nice installer. https://github.com/uksysadmin/OpenStackInstaller/tree/essex

Another option would be to try building them with hastexo’s script, which he was nice enough to put together for the community.

I linked to it here http://networkstatic.net/2012/05/openstack-essex-installation-and-configuration-screencast-from-scratch/

$chmod +x endpoints.sh

./endpoints.sh -p openstack -R RegionOne (-p is your password and RegionOne is a generic region)

Hi Danielle, Just following up if you got fixed up? I can test the installer on a VM real quick if you are still stumped.

cheers.

Hi Brent,

Thank you for this posting the content is great. I have a troubleshooting question regarding the IP Address assignment during instance startup.

When I attempt startup it fails with Status=“Error” and only one IP address assigned (10.1.0.5) instead of the expected two.

Do you have any troubleshooting suggestions or know where to find the error logs for OpenStack installation?

Thanks,

Paul

After running the instances, on using the following command

ssh -i key.pem ubuntu@10.1.0.3

this error is generated…

ssh: connect to host 10.1.0.3 port 22: No route to host

kindly suggest a solution.

thanks

Adwait

i also have that problem

After running the instances, on using the following command

ssh -i key.pem ubuntu@10.1.0.3

this error is generated…

ssh: connect to host 10.1.0.3 port 22: No route to host

kindly suggest a solution.

thanks

ron

Hi Ron, I will try and give it a run this week and see what’s up. In the meantime, have you tried the RackSpace build? I wanted a node to test with their public cloud but some twit lady blew me off, but I will say I like the installer, very friendly. Let me know if that works out for you and I will try and revisit this when I get a breather this week. Thanks!

http://networkstatic.net/rackspace-openstack-installation-on-a-kvm-vm/

Hi, I have the same problem, I cannot ssh or ping the instances from the terminal inside the ubuntu. Did you find any solutions?

Thanks!

Hi,

I have done this through my Windows host. I think you are running Ubuntu Server with Ubuntu. I’m just running VirtualBox off my host computer. So I’m not sure what to do with the key to ssh to the server. Or is it still the same?

Is it possible to do files onto this instance, you know make a file and have my host computer or another host computer via a switch up and down files to and from the running server on virtualbox?

Sorry if I am not make sense, I’m not entirely sure myself.

Many thanks,

Daniel

Hi Daniel, the only problem with using VirtualBox is that it does not support nested hypervisors. In Linux you can check for this on the guest VM by running:

grep --color vmx /proc/cpuinfo

More information here:

http://networkstatic.net/openstack-quantum-devstack-on-a-laptop-with-vmware-fusion/
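The grep above can also be sketched in Python if you want to script the check. Note one addition that is mine and not in the original command: besides the Intel `vmx` flag it also looks for `svm`, the flag AMD CPUs use for the same feature. `has_hw_virt` is a hypothetical helper name.

```python
def has_hw_virt(cpuinfo_text):
    """True if any 'flags' line in /proc/cpuinfo text lists vmx or svm."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            # Lines look like "flags : fpu vme ... vmx ..."
            _, _, value = line.partition(":")
            flags = value.split()
            if "vmx" in flags or "svm" in flags:
                return True
    return False
```

On the guest VM, feed it the contents of /proc/cpuinfo, e.g. `has_hw_virt(open("/proc/cpuinfo").read())`. False means no hardware virtualization is exposed to the guest, so you are limited to plain QEMU emulation as used in this demo.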

VMware Workstation for Windows does support nested hypervisors, if you are using your laptop or desktop for learning OpenStack. Check this post out for installing Grizzly w/ DevStack:

http://networkstatic.net/installing-openstack-grizzly-with-devstack/

I keep getting now available in Glance () ==> Empty

Where is the credentials parts , all the command needs the environment to be set up !!

Don’t use Essex 🙂 It’s really old in the OpenStack lifecycle.

Installers:

-Juju

https://juju.ubuntu.com/get-started/openstack/

-DevStack

http://networkstatic.net/installing-openstack-grizzly-with-devstack/

-RackSpace OpenStack installer

http://www.rackspace.com/cloud/private/openstack_software/